AI Agent Architecture: A Comprehensive Developer's Guide

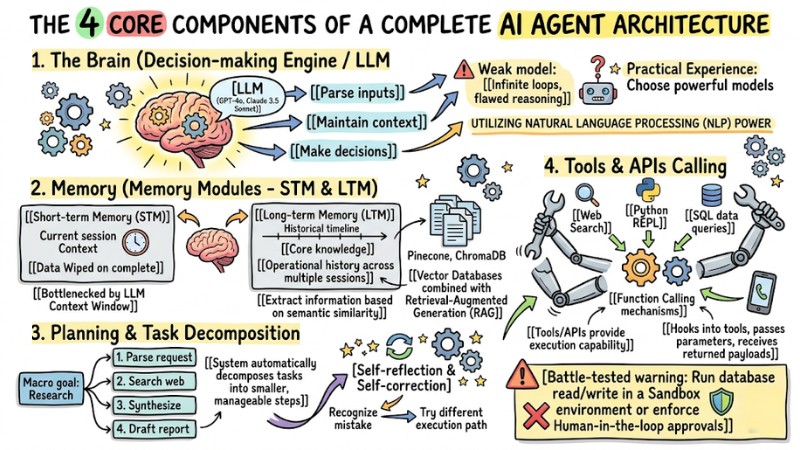

AI Agent architecture is the organization of an autonomous AI system around 4 main components: the LLM brain, memory, planning, and tools/APIs. This article is for developers, AI engineers, and project managers who want to deeply understand AI Agent architecture. Specifically, I will break down its exact nature, the 4 core components, and battle-tested operational models, helping you master the design thinking and select the right framework to automate business workflows.

Key Takeaways

- Nature of Agentic AI: You will clearly understand the difference between a large language model (traditional LLM) and a fully autonomous AI Agent.

- 4 technical pillars: Master the architecture comprising: Brain, Memory, Planning, and Tools.

- Battle-tested workflow: Visualize the task execution pipeline by analyzing the Agentic workflow.

- Reasoning models: Approach advanced design patterns like ReAct or Tree-of-Thought.

- Multi-agent architecture: Learn how a collaborative multi-agent system operates to minimize error rates.

- Framework orientation: Equip yourself with criteria for selecting a framework that fits your actual project scale.

What is an AI Agent? The Difference Between AI Agents and Traditional LLMs

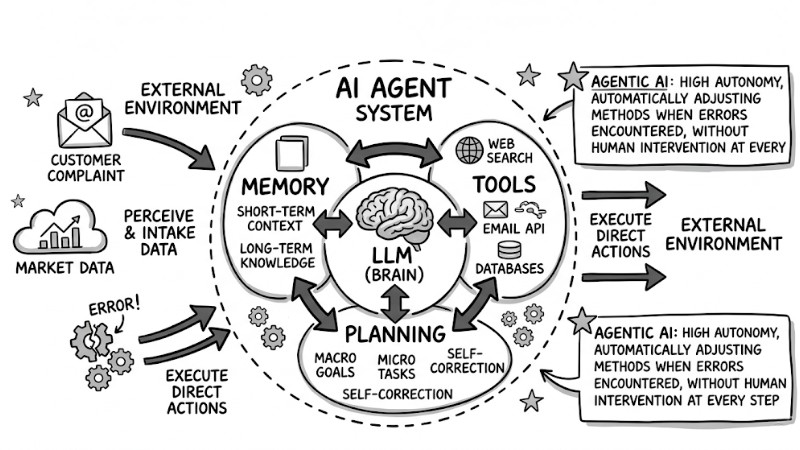

Defining AI Agents

An AI Agent is an autonomous system capable of perceiving its environment, making independent decisions, and executing specific actions to achieve predefined goals. Instead of just returning static text, Agentic AI possesses high Autonomy, automatically adjusting its methods when encountering errors without human intervention at every step.

AI Agents as Autonomous Systems

Distinguishing LLM Agent Architecture from LLMs (Large Language Models)

Many beginners mistake ChatGPT itself for an AI Agent. In reality, the LLM merely acts as the "brain" for language processing and logical reasoning. AI Agent architecture, by contrast, is a complete software system in which source code (Python, TypeScript) wraps the LLM's API to give it "memory" and "limbs" (tools).

From a Software Engineering perspective, the differences are clearly shown in the table below:

| Criteria | Traditional LLM (Vanilla LLM) | AI Agent (LLM Agents) |
|---|---|---|
| Output | Static responses (Text, code) based on a prompt. | Actual actions (Sending emails, querying databases). |
| Operational Mode | Zero-shot or Few-shot, answering in a single run. | Multi-step reasoning loops. |
| Tool Usage | Cannot proactively call external APIs. | Automatically selects and uses appropriate tools. |
| Autonomy | Passive, waits for humans to input the next command. | Proactively plans and self-corrects upon failure. |
| Best Use Case | Drafting text and summarizing content. | Solving multi-step problems and interacting with external systems. |

The 4 Core Components of a Complete AI Agent Architecture

1. The Brain (Decision-making Engine / LLM)

The brain is the orchestration center of the entire system, utilizing the natural language processing (NLP) power of LLMs. It is responsible for parsing inputs, maintaining context, and deciding what to do next. In practice, the brain needs a model with strong reasoning capabilities (such as GPT-4o or Claude 3.5 Sonnet). With a weaker model, the Agent can easily fall into infinite loops due to flawed reasoning.

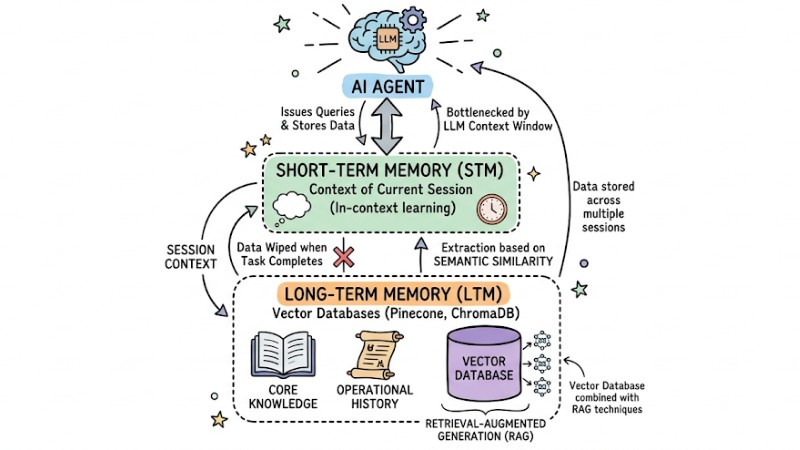

2. Memory (Memory Modules - STM & LTM)

To prevent the AI Agent from getting "amnesia" after every operation, the architecture requires specialized storage modules.

- Short-term Memory (STM): Stores the context of the current session (In-context learning). This data is wiped when the task completes and is bottlenecked by the LLM's context window.

- Long-term Memory (LTM): Stores core knowledge and operational history across multiple sessions. The system typically uses Vector Databases (like Pinecone, ChromaDB) combined with Retrieval-Augmented Generation (RAG) techniques to extract information based on semantic similarity.
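The two memory tiers above can be sketched in plain Python. This is only an illustration under loud assumptions: tokens are approximated by word counts, and a bag-of-words cosine similarity stands in for a real embedding model and vector database. `ShortTermMemory` and `LongTermMemory` are hypothetical names, not part of any library.

```python
import math
import re
from collections import Counter, deque


def _tokens(text: str) -> list[str]:
    """Crude tokenizer (assumption): lowercase alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def _cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class ShortTermMemory:
    """Holds the current session's messages within a token budget."""

    def __init__(self, max_tokens: int) -> None:
        self.max_tokens = max_tokens
        self.messages: deque[str] = deque()

    def add(self, message: str) -> None:
        self.messages.append(message)
        # Evict the oldest messages once the budget is exceeded,
        # mimicking a limited context window.
        while self._size() > self.max_tokens and len(self.messages) > 1:
            self.messages.popleft()

    def _size(self) -> int:
        return sum(len(_tokens(m)) for m in self.messages)


class LongTermMemory:
    """Toy stand-in for a vector database: ranks stored texts by similarity."""

    def __init__(self) -> None:
        self.records: list[tuple[str, Counter]] = []

    def store(self, text: str) -> None:
        self.records.append((text, Counter(_tokens(text))))

    def search(self, query: str, top_k: int = 1) -> list[str]:
        q = Counter(_tokens(query))
        ranked = sorted(self.records, key=lambda r: _cosine(q, r[1]), reverse=True)
        return [text for text, _ in ranked[:top_k]]


stm = ShortTermMemory(max_tokens=8)
stm.add("user asks about refund policy")
stm.add("agent looks up the policy document")  # pushes the first message out

ltm = LongTermMemory()
ltm.store("Refunds are accepted within 30 days of purchase")
ltm.store("Our office is closed on public holidays")
print(list(stm.messages))
print(ltm.search("refund within how many days"))
```

In a production system the word counts would be replaced by real embeddings and the list scan by a vector index, but the split of responsibilities stays the same: the short-term buffer is bounded and disposable, the long-term store is searched by similarity.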

Short-term Memory (Context) and Vector Database (Long-term Memory)

3. Planning & Task Decomposition

This capability helps the Agent solve complex problems. The system automatically decomposes tasks into smaller, manageable steps (e.g., 1. Parse request -> 2. Search web -> 3. Synthesize -> 4. Draft report). The most valuable aspect of planning ability lies in self-reflection and self-correction, enabling the AI to recognize mistakes in previous steps and try a different execution path.
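As a rough sketch of decomposition plus self-correction, the plan below is a list of steps, and each step carries alternative execution paths that are tried in order. All names (`Step`, `run_plan`, the step functions) are hypothetical, and the step bodies are hard-coded placeholders where a real Agent would call an LLM or a tool.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    name: str
    # Candidate ways to carry out the step, tried in order.
    attempts: list[Callable[[], str]]


@dataclass
class PlanResult:
    outputs: dict[str, str] = field(default_factory=dict)
    reflections: list[str] = field(default_factory=list)


def run_plan(steps: list[Step]) -> PlanResult:
    result = PlanResult()
    for step in steps:
        for attempt in step.attempts:
            try:
                result.outputs[step.name] = attempt()
                break  # step succeeded, move on to the next one
            except RuntimeError as err:
                # Self-reflection: record what went wrong, then try another path.
                result.reflections.append(
                    f"{step.name}: {attempt.__name__} failed ({err})"
                )
        else:
            raise RuntimeError(f"all attempts failed for step '{step.name}'")
    return result


# Placeholder step implementations (assumptions for illustration only).
def parse_request() -> str:
    return "topic: AI trends"


def search_web() -> str:
    raise RuntimeError("blocked")


def search_cache() -> str:
    return "3 cached articles"


def synthesize() -> str:
    return "key findings"


def draft_report() -> str:
    return "report drafted"


plan = [
    Step("parse", [parse_request]),
    Step("search", [search_web, search_cache]),
    Step("synthesize", [synthesize]),
    Step("draft", [draft_report]),
]
result = run_plan(plan)
print(result.outputs["search"])
print(result.reflections)
```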

4. Tools & API Calling

Tools/APIs provide the execution capability for the AI Agent to manipulate the environment. Via Function Calling mechanisms, the Agent framework hooks into tools, passes parameters, and receives returned payloads. Common tools include Web Search, Python REPL, and SQL data queries.

Battle-tested warning: When granting API permissions to an Agent (especially read/write database commands), you must run it in a Sandbox environment or enforce Human-in-the-loop approvals to prevent the system from autonomously wrecking your data.
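A minimal sketch of how a tool dispatcher with a Human-in-the-loop gate might look. It assumes the model emits a tool call as a JSON object with `name` and `arguments` keys, which is a common shape but not any specific vendor's format. The registry, the `requires_approval` flag, and both tools are hypothetical placeholders that touch no network or database.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Tool:
    func: Callable[..., Any]
    requires_approval: bool = False  # gate risky tools behind a human


def web_search(query: str) -> str:
    return f"results for {query!r}"  # placeholder, no real network call


def run_sql(statement: str) -> str:
    return f"executed: {statement}"  # placeholder, no real database


TOOLS = {
    "web_search": Tool(web_search),
    "run_sql": Tool(run_sql, requires_approval=True),
}


def dispatch(tool_call_json: str, approve: Callable[[str, dict], bool]) -> str:
    """Parse a tool call, check approval if needed, and return the payload."""
    call = json.loads(tool_call_json)
    name, args = call["name"], call.get("arguments", {})
    tool = TOOLS.get(name)
    if tool is None:
        return f"error: unknown tool {name!r}"
    if tool.requires_approval and not approve(name, args):
        return f"error: {name} call rejected by human reviewer"
    try:
        return str(tool.func(**args))
    except TypeError as err:
        # Most often caused by the model passing wrong or missing parameters.
        return f"error: bad arguments for {name}: {err}"


def deny_all(name: str, args: dict) -> bool:
    """Stand-in for a human reviewer who rejects every risky call."""
    return False


print(dispatch('{"name": "web_search", "arguments": {"query": "AI trends"}}', deny_all))
print(dispatch('{"name": "run_sql", "arguments": {"statement": "DROP TABLE users"}}', deny_all))
```

Errors are returned as strings rather than raised so that the Agent can read them as an observation and decide what to try next.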

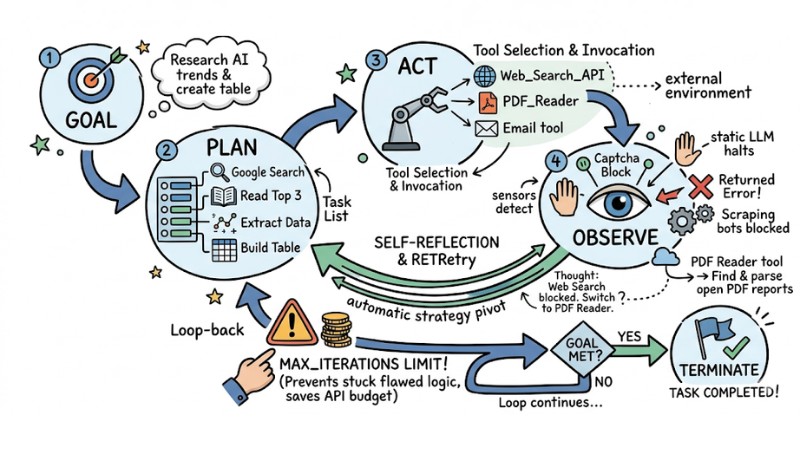

Agentic Workflow: The Operational Loop of AI Agent Architecture

To clearly understand the iterative workflow of an AI Agent, I will simulate a real-world scenario: Building a "Market Research Agent". The AI Agent's task execution process unfolds across 4 steps in a closed reasoning loop.

- Step 1: Intake Goal & Decompose (Goal & Plan). The user requests: "Research this year's AI trends and create a summary table". The Agent parses the prompt and generates a task list: Google search -> Read the top 3 articles -> Extract data -> Build the table.

- Step 2: Tool Selection & Action (Act). The Agent decides to invoke the Web_Search_API tool with the keyword "AI trends 2024".

- Step 3: Observation & Encountering Issues (Observe). The returned payload is an error because the website blocks scraping bots (CAPTCHA). In a single-pass LLM pipeline with no reasoning loop, the process would halt here with an error.

- Step 4: Self-Reflection & Retry (Reflect & Loop). The Agent recognizes the failure and automatically pivots its strategy. It spawns a new thought process:

Thought: The Web Search tool is blocked. I cannot fetch data from standard news sites.

Action: Switch to using the PDF_Reader tool.

Action Input: Find and parse open-source PDF reports.

This process loops until the goal is met. A crucial battle-tested tip is to always set the max_iterations parameter (the maximum loop limit). This prevents the Agent from getting stuck in flawed logic, saving you from burning through your API budget in minutes.
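The loop described above, including the `max_iterations` guard, might be sketched as follows. Everything here is simulated: `choose_action` is a hard-coded stand-in for the LLM's decision, and the two tool functions are placeholders named after the tools in the scenario. None of this is a specific framework's API.

```python
def web_search_api(query: str) -> str:
    raise RuntimeError("blocked by CAPTCHA")  # simulate the blocked site


def pdf_reader(query: str) -> str:
    return f"parsed 2 PDF reports about {query}"


TOOLS = {"Web_Search_API": web_search_api, "PDF_Reader": pdf_reader}


def choose_action(history: list[tuple[str, str]]) -> str:
    """Stand-in for the LLM: pick the first tool that has not failed yet."""
    failed = {tool for tool, obs in history if obs.startswith("ERROR")}
    for name in TOOLS:
        if name not in failed:
            return name
    return "GIVE_UP"


def run_agent(goal: str, max_iterations: int = 5) -> tuple[str, list[tuple[str, str]]]:
    history: list[tuple[str, str]] = []
    for _ in range(max_iterations):  # hard cap on the reasoning loop
        action = choose_action(history)  # Act
        if action == "GIVE_UP":
            break
        try:
            observation = TOOLS[action](goal)  # Observe
        except RuntimeError as err:
            observation = f"ERROR: {err}"
        history.append((action, observation))
        if not observation.startswith("ERROR"):  # Reflect: goal met?
            return observation, history
    return "stopped without reaching the goal", history


answer, history = run_agent("AI trends 2024")
print(answer)
print(history)
```

With `max_iterations=1` the same call stops after the failed web search instead of retrying, which is the budget-protecting behavior the tip above is about.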

The AI Agent Architecture Lifecycle

Top 3 Representative Reasoning and Planning Architecture Models

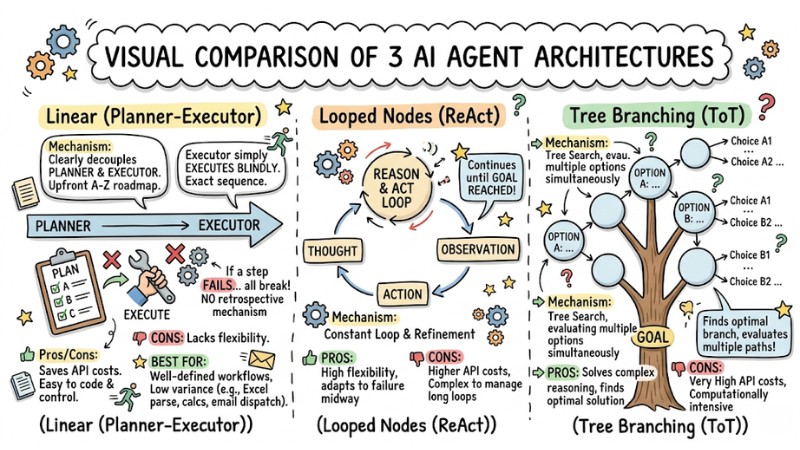

1. Planner-Executor Model (Linear)

- Mechanism: Clearly decouples two entities. The "Planner" maps out a detailed A-Z roadmap upfront. The "Executor" simply executes blindly in that exact sequence.

- Pros/Cons: Saves on API call costs, easy to code and control. However, it lacks flexibility: if step 2 fails, every subsequent step breaks because there is no mechanism to revisit the plan.

- Best for: Tasks with well-defined workflows and low variance (e.g., parsing an Excel file, running internal calcs, then dispatching an email).

2. ReAct Model (Reasoning and Acting)

- Mechanism: Tightly couples thinking and acting in an alternating cycle: Thought -> Action -> Observation.

- Pros/Cons: This is currently the most popular Agentic design pattern. It is highly flexible: the Agent sees each failure as soon as it happens and can correct course right away. The downside is that it consumes more context tokens than the linear model.

- Best for: Virtual assistants interacting directly with external environments, like customer support agents or bots that retrieve and synthesize information.
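One practical piece of a ReAct implementation is turning the model's text into a structured step. The sketch below assumes the model replies in the Thought / Action / Action Input layout used in the Market Research example earlier. Real frameworks use their own formats, and `parse_react` is a hypothetical helper.

```python
import re
from typing import NamedTuple


class ReActStep(NamedTuple):
    thought: str
    action: str
    action_input: str


_PATTERN = re.compile(
    r"Thought:\s*(?P<thought>.*?)\s*"
    r"Action:\s*(?P<action>.*?)\s*"
    r"Action Input:\s*(?P<action_input>.*)",
    re.DOTALL,
)


def parse_react(text: str) -> ReActStep:
    """Extract the three ReAct fields, or fail loudly if the format is off."""
    match = _PATTERN.search(text)
    if match is None:
        raise ValueError("output does not follow Thought/Action/Action Input")
    return ReActStep(
        match.group("thought").strip(),
        match.group("action").strip(),
        match.group("action_input").strip(),
    )


model_output = (
    "Thought: The Web Search tool is blocked.\n"
    "Action: PDF_Reader\n"
    "Action Input: open-source AI trend reports"
)
step = parse_react(model_output)
print(step.action, "<-", step.action_input)
```

Raising on malformed output matters in practice: the error can be fed back to the model as an observation so it can restate its step in the expected format.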

3. Tree-of-Thought Model (ToT)

- Mechanism: Instead of linear thinking, the ToT architecture generates a tree structure. At each node, the AI spawns multiple branching thought paths, scores the viability of each branch, preserves the best ones, and prunes the bad ones.

- Pros/Cons: Unlocks multi-dimensional logical exploration and handles highly complex problems well. The trade-off is high compute cost, heavy token consumption, and considerable engineering complexity.

- Best for: Complex problems demanding strategic planning, mathematical solving, or writing tricky algorithmic code.
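The branch, score, and prune cycle can be illustrated with a small beam search. This is a toy under stated assumptions: in a real ToT system both `expand` (propose next thoughts) and `score` (rate a partial solution) would be LLM calls, while here they are simple arithmetic on a made-up puzzle of picking two numbers that sum to a target.

```python
from typing import Callable

Thought = tuple[int, ...]


def tree_of_thought(
    start: Thought,
    expand: Callable[[Thought], list[Thought]],
    score: Callable[[Thought], float],
    depth: int,
    beam_width: int,
) -> Thought:
    frontier = [start]
    for _ in range(depth):
        # Branch: every surviving thought spawns several children.
        candidates = [child for node in frontier for child in expand(node)]
        if not candidates:
            break
        # Score each branch, keep the best ones, prune the rest.
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]
    return max(frontier, key=score)


NUMBERS = (2, 5, 7)
TARGET = 14


def expand(node: Thought) -> list[Thought]:
    return [node + (n,) for n in NUMBERS]


def score(node: Thought) -> float:
    return -abs(TARGET - sum(node))  # closer to the target is better


best = tree_of_thought((), expand, score, depth=2, beam_width=2)
print(best)
```

The cost profile mentioned above follows directly from the structure: each level evaluates `beam_width * len(NUMBERS)` candidates, and with LLM-backed `expand` and `score` every one of those is at least one model call.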

3 Prominent Reasoning and Planning Architectures

Multi-Agent Collaboration Architecture

Instead of cramming every skill into a single monolithic super Agent, the current trend is to build multi-agent systems to optimize collaboration. You spin up an AI squad with discrete roles, for example: a Coder Agent that writes code, a Reviewer Agent that hunts for bugs, and a Manager Agent that orchestrates.

Multi-agent collaboration applies the "divide and conquer" principle. Having Agents communicate, debate, and cross-examine each other helps catch the "hallucinations" that LLMs are prone to, producing more accurate and in-depth output.
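A toy version of the Coder / Reviewer / Manager squad is sketched below. The three functions are hypothetical stand-ins for LLM-backed Agents: the Coder returns hard-coded drafts, and the Reviewer checks one edge case. Note the assumption flagged in the code: `exec` is used only because the draft strings are hard-coded, and generated code in a real system belongs in a sandbox.

```python
from dataclasses import dataclass


@dataclass
class Review:
    approved: bool
    feedback: str = ""


def coder_agent(task: str, feedback: str) -> str:
    """Stand-in coder: the first draft has a bug, the revision fixes it."""
    if "division by zero" in feedback:
        return "def mean(xs): return sum(xs) / len(xs) if xs else 0.0"
    return "def mean(xs): return sum(xs) / len(xs)"


def reviewer_agent(code: str) -> Review:
    """Stand-in reviewer: runs the draft against an empty-list edge case."""
    namespace: dict = {}
    exec(code, namespace)  # safe only because drafts are hard-coded here
    try:
        namespace["mean"]([])
    except ZeroDivisionError:
        return Review(False, "division by zero on empty input")
    return Review(True)


def manager_agent(task: str, max_rounds: int = 3) -> tuple[str, int]:
    """Orchestrates the loop: route reviewer feedback back to the coder."""
    feedback = ""
    for round_no in range(1, max_rounds + 1):
        code = coder_agent(task, feedback)
        review = reviewer_agent(code)
        if review.approved:
            return code, round_no
        feedback = review.feedback
    raise RuntimeError("no approved draft within max_rounds")


approved_code, rounds = manager_agent("write a mean() function")
print(rounds, approved_code)
```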

Top 5 Most Popular AI Agent Frameworks Today

From a battle-tested perspective, choosing the right framework goes a long way toward determining your project's success. Below are five of the most popular AI Agent frameworks:

1. LangGraph (Evolved from LangChain)

- Detailed features: Operates on a directed state graph architecture. The biggest differentiator is its support for cycles (the AI can think again and retry after a mistake) rather than only straight-line (linear) execution. State is persisted at every step.

- Detailed review: Fine-grained control: you know exactly which step the AI is at within the graph. Well suited to Production environments because it supports Human-in-the-loop (allowing a human to approve or edit midway) and robust fault tolerance. In return, the concepts of State and Node management are quite abstract for beginners.
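To make the idea of a state graph with cycles concrete, here is a plain-Python sketch. It is deliberately not LangGraph's API: `MiniGraph`, its methods, and the `END` marker are hypothetical names chosen for illustration. Each node transforms the state, and a router function picks the next node, which is what allows a loop back to an earlier step.

```python
from typing import Callable

State = dict
END = "__end__"  # hypothetical marker for "stop here"


class MiniGraph:
    def __init__(self) -> None:
        self.nodes: dict[str, Callable[[State], State]] = {}
        self.routers: dict[str, Callable[[State], str]] = {}

    def add_node(
        self,
        name: str,
        fn: Callable[[State], State],
        router: Callable[[State], str],
    ) -> None:
        self.nodes[name] = fn
        self.routers[name] = router

    def run(self, start: str, state: State, max_steps: int = 10):
        current, trace = start, []
        for _ in range(max_steps):  # cap steps so a cycle cannot run forever
            if current == END:
                break
            trace.append(current)  # you always know which node is active
            state = self.nodes[current](state)
            current = self.routers[current](state)
        return state, trace


def draft(state: State) -> State:
    return {**state, "attempts": state["attempts"] + 1}


def check(state: State) -> State:
    # Pretend the first draft is rejected and the second is accepted.
    return {**state, "ok": state["attempts"] >= 2}


graph = MiniGraph()
graph.add_node("draft", draft, router=lambda s: "check")
graph.add_node("check", check, router=lambda s: END if s["ok"] else "draft")

final_state, trace = graph.run("draft", {"attempts": 0})
print(trace)
```

The `check -> draft` edge is the cycle. A linear chain has no way to express it.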

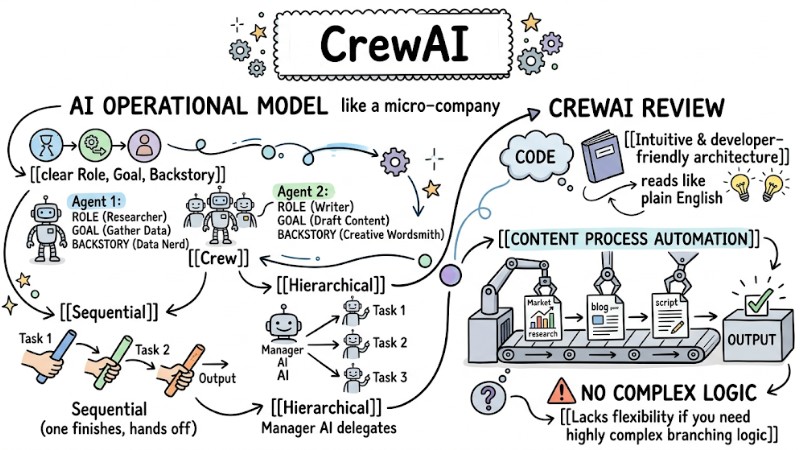

2. CrewAI

- Detailed features: Puts AI into an operational model like a micro-company. Each Agent is assigned a clear Role, Goal, and Backstory. Agents collaborate via Tasks using two main models: Sequential (one finishes, hands off to the next) or Hierarchical (one AI acts as a manager delegating work).

- Detailed review: Extremely intuitive and developer-friendly architecture; the code reads like plain English. Fantastic for content process automation projects (market research, blogging, scriptwriting). However, it lacks flexibility if you need highly complex branching logic.

CrewAI is best suited for content process automation projects

3. Microsoft AutoGen

- Detailed features: Conversation-driven architecture. Agents chat, debate, and cross-delegate tasks to solve problems. Its most powerful capability is that one Agent can write code, and another Agent acts as the execution environment to run that code immediately to find the result.

- Detailed review: A strong choice for problems related to programming, mathematics, or Data Science. Supports a Human-proxy mode (a human acts as an Agent in the squad). The downside is that you must set up an isolated environment (like a Docker container) to prevent the AI from executing destructive code on the host system.

4. LlamaIndex

- Detailed features: At its core is Agentic RAG (RAG with reasoning). LlamaIndex provides Agents capable of automatic Query Routing – for example: asking about policies prompts the Agent to dive into a Vector DB to find a PDF, while asking about revenue prompts it to jump into a SQL database to run a query.

- Detailed review: Particularly strong for internal document processing. It reduces "hallucination" by grounding the AI's reasoning in the organization's own data repository. It isn't strong at making AIs chat with each other, but excels at parsing questions and hunting down in-depth information.
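Query routing can be sketched without any framework. The example below is not LlamaIndex code: it uses a hypothetical keyword-based `route` function where a real Agent would ask the LLM to choose, and both query engines are placeholders that return canned strings.

```python
import re

# Hypothetical keyword lists; a real router would let the LLM decide.
ROUTES = {
    "sql_database": {"revenue", "sales", "profit"},
    "vector_db": {"policy", "policies", "handbook"},
}


def route(question: str) -> str:
    words = set(re.findall(r"[a-z0-9]+", question.lower()))
    for engine, keywords in ROUTES.items():
        if words & keywords:
            return engine
    return "vector_db"  # default to document search


def query_sql(question: str) -> str:
    return "SQL result (placeholder)"


def query_vector_db(question: str) -> str:
    return "Matching PDF passage (placeholder)"


ENGINES = {"sql_database": query_sql, "vector_db": query_vector_db}


def answer(question: str) -> str:
    engine = route(question)
    return f"[{engine}] {ENGINES[engine](question)}"


print(answer("What was Q3 revenue?"))
print(answer("What is the leave policy?"))
```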

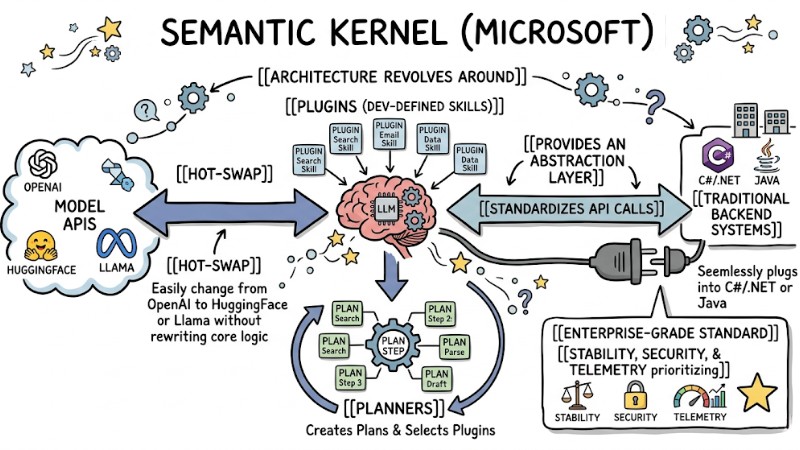

5. Semantic Kernel (Microsoft)

- Detailed features: Architecture revolves around Plugins (dev-defined skills) and Planners. It provides an abstraction layer that standardizes API calls. You can easily hot-swap from OpenAI's models to HuggingFace or Llama without rewriting the core logic.

- Detailed review: "Enterprise-grade" standard. It is designed to plug seamlessly into traditional backend systems using C#/.NET or Java. Stability, security, and telemetry are prioritized above all else.
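The hot-swap idea rests on coding against an interface rather than a vendor SDK. The sketch below shows that principle in plain Python and is not Semantic Kernel's API: `ChatModel`, the two stub backends, and `summarize` are hypothetical, and the stubs return tagged strings instead of calling any model.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only contract the core logic depends on."""

    def complete(self, prompt: str) -> str: ...


class StubOpenAIModel:
    def complete(self, prompt: str) -> str:
        return f"[openai-stub] {prompt}"  # no real API call


class StubLocalLlamaModel:
    def complete(self, prompt: str) -> str:
        return f"[llama-stub] {prompt}"  # no real inference


def summarize(model: ChatModel, text: str) -> str:
    """Core logic: unchanged no matter which backend is plugged in."""
    return model.complete(f"Summarize: {text}")


print(summarize(StubOpenAIModel(), "quarterly report"))
print(summarize(StubLocalLlamaModel(), "quarterly report"))
```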

Reliability, security, and observability are always the top priorities in Semantic Kernel

Guide to Selecting the Right AI Agent Architecture for Real-World Projects

System design requires balancing performance and cost. Use the matrix below to shape the task automation strategy for your project.

| Problem Complexity | Real-world Example | Recommended Architecture Model | Recommended Framework |
|---|---|---|---|
| Low (Linear, clear-cut) | Sending daily emails, parsing form data. | Planner-Executor | LangChain (Chains) |
| Medium (Requires interaction, lookups) | Customer support chatbots, RAG doc lookups, news summarization. | ReAct Single-Agent | LlamaIndex, LangGraph |
| High (Complex logic, creative) | Financial analysis, writing full-stack software, launching marketing campaigns. | Multi-Agent / Tree-of-Thought | CrewAI, AutoGen |

Battle-tested advice: Don't start with a Multi-agent setup right out of the gate. Build a Single-Agent using a basic ReAct model, ensure it flawlessly invokes exactly one tool (like Web Search), and gracefully handles error payloads before upgrading to more complex architectures. AI software engineering prioritizes stability over intelligence.

Frequently Asked Questions about AI Agent Architecture

Does building an AI Agent require beefy GPU servers?

No, unless you want to run open-source models (like Llama 3) directly on a local machine. The vast majority of current architectures utilize cloud APIs from OpenAI (GPT models), Anthropic, or Google. Your Agent's source code is very lightweight and can run smoothly on a basic server.

Why not Fine-tune the model instead of using an Agentic Workflow?

Fine-tuning mainly adds static knowledge and adjusts the model's tone of voice; it does not resolve reasoning bottlenecks. For the AI to know "how to work", correct its own mistakes, and fetch real-time data, you need an Agentic Workflow coupled with tools.

What is an ACI (Agent-Computer Interface)?

If UI/UX is the interface through which humans interact with computers, the Agent-Computer Interface (ACI) is the interface through which AI Agents interact with machines and software. A well-designed ACI shapes tool and API outputs for the Agent, stripping away redundant payloads so the AI can "read" and "understand" the state of the system more easily.

How do you guarantee safety and control costs when deploying AI Agents?

AI ethics and safety must always be prioritized. Agents operating in a loop can autonomously spam API calls thousands of times if they hit an error, leading to a blown budget. You must always configure a loop limit (max_iterations), monitor your daily API burn rate, and isolate the code execution environment (Sandbox) to prevent malicious code from wrecking your system.
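A small guard object is one way to enforce both limits in code. The sketch below is an assumption-laden illustration: `UsageGuard` and `BudgetExceeded` are hypothetical names, costs are tracked in integer cents to avoid floating-point drift, and the flat one-cent price per call is made up.

```python
class BudgetExceeded(RuntimeError):
    """Raised when the next call would break an iteration or cost limit."""


class UsageGuard:
    def __init__(self, max_iterations: int, max_cost_cents: int) -> None:
        self.max_iterations = max_iterations
        self.max_cost_cents = max_cost_cents
        self.iterations = 0
        self.spent_cents = 0

    def charge(self, cost_cents: int) -> None:
        """Reserve budget before a model call; refuse if a limit would break."""
        if self.iterations + 1 > self.max_iterations:
            raise BudgetExceeded("iteration limit reached")
        if self.spent_cents + cost_cents > self.max_cost_cents:
            raise BudgetExceeded("cost limit reached")
        self.iterations += 1
        self.spent_cents += cost_cents


guard = UsageGuard(max_iterations=1000, max_cost_cents=5)
stop_reason = ""
while True:  # simulate an Agent stuck retrying a failing call
    try:
        guard.charge(1)  # hypothetical flat price of 1 cent per call
    except BudgetExceeded as err:
        stop_reason = str(err)
        break

print(guard.iterations, guard.spent_cents, stop_reason)
```

Checking before spending, rather than after, means the guard never lets the total go over the configured ceiling.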

The emergence of AI Agent architecture is the dividing line between a generic text-generation tool and a truly autonomous software system. Mastering the 4 core components (Brain, Memory, Planning, Tools) and understanding the Agentic reasoning flow is a mandatory foundation for engineering highly reliable products.

Start your automation journey today. You can install the CrewAI or LangChain libraries, use a basic API key, and try setting up an Agent tasked with automated news synthesis. Those first lines of real-world code will help you grasp this architectural mindset with absolute clarity.
