Tasks for Coding Agents: How to Assign Work Effectively so AI Codes Accurately

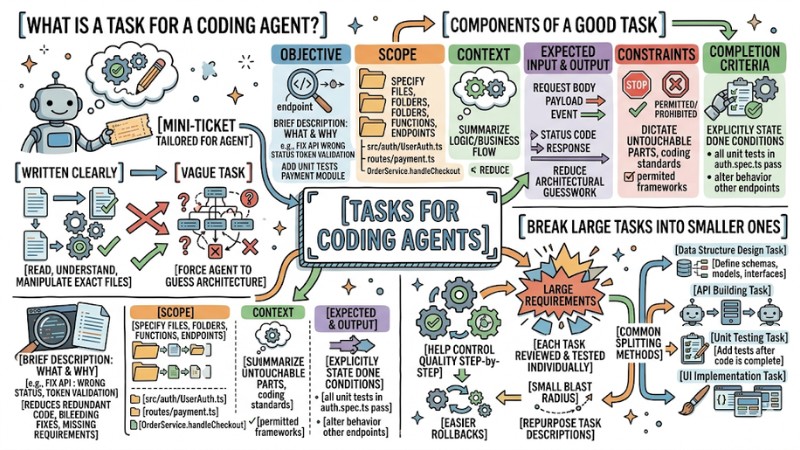

A task for a coding agent is a structured description of the objective, scope, context, constraints, and completion criteria for a programming job you want the AI to execute. This article shows how to write clear tasks, break down large assignments, provide selective context, apply a standard delegation workflow, and bring in Agent Skills when needed, so the AI's output stays close to your requirements.

Key Takeaways

- Concept: Grasp the essence of an AI-specific job description, helping establish clear goals and boundaries to prevent the AI from guessing and breaking source code architecture.

- Component Structure: Identify the core elements shaping a standard requirement, creating a consistent delegation template and accurately guiding the AI's output.

- Task Fragmentation Principle: Understand the importance of breaking massive requirements down into independent units of work, making it easy to control module quality, streamline code reviews, and safely roll back if needed.

- The Role of Context: Understand the necessity of filtering info rather than cramming the entire project in, helping the AI process data quickly, accurately, and without noise.

- Standard Interaction Framework: Master the 4-step closed-loop working model to maintain clear boundaries between human requirement-setting and machine execution support.

- The Value of Agent Skills: Recognize the "skill package" concept stored in SKILL.md files, helping synchronize how repetitive tasks (like writing tests or generating docs) are handled project-wide without having to rewrite descriptions from scratch.

- Frequently Asked Questions: Get answers regarding standard task length, limits on how many jobs to assign at once, and rules to prevent AI from arbitrarily touching unrelated source code zones.

What is a task for a coding agent?

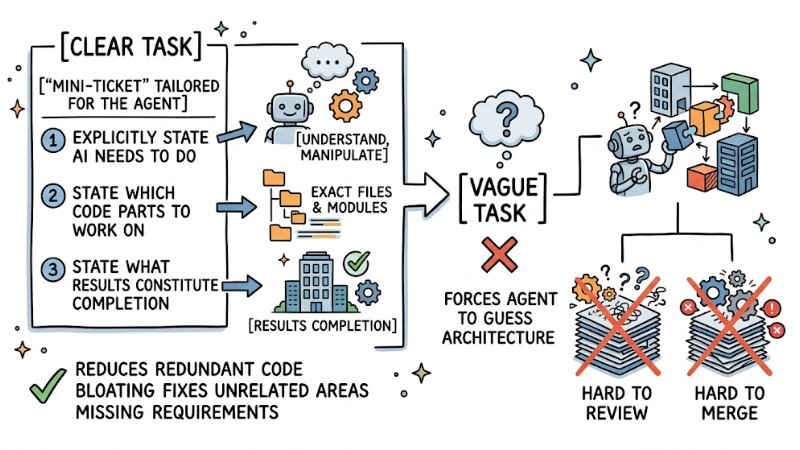

A task for a coding agent is a description of a specific programming job you want the AI to perform, packaged as a “mini-ticket” tailored for the agent. In this task, you must explicitly state what the AI needs to do, which code parts to work on, and what results constitute completion.

When a task is written clearly, the coding agent can read, understand, and manipulate the exact files and modules, reducing the risk of generating redundant code, bleeding fixes into unrelated areas, or missing critical requirements. Conversely, a vague task forces the agent to guess the architecture, leading to outputs that are hard to review and hard to merge into the codebase.

A Coding Agent task is a description of a specific programming job you want the AI to perform

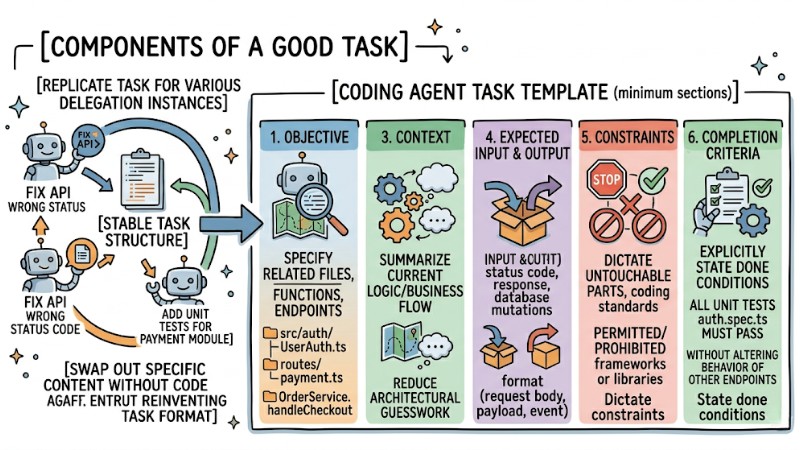

What components make a good task?

An effective task should have a stable structure so you can replicate it for various delegation instances. At a minimum, a coding agent task should include the following sections:

- Objective: Briefly describe the job to be done and the reason for doing it, for example, fixing an API returning the wrong status code during token validation, or adding unit tests for the payment module.

- Scope: Specify the related files, folders, functions, or endpoints, e.g., src/auth/UserAuth.ts, routes/payment.ts, OrderService.handleCheckout.

- Context: Summarize the current logic or related business flow to reduce architectural guesswork.

- Expected Input and Output: Clearly state the input format (request body, payload, event) and output (status code, response, database mutations).

- Constraints: Dictate which parts are untouchable, coding standards, and which frameworks or libraries are permitted or prohibited.

- Completion Criteria: Explicitly state the conditions for the task to be considered done, e.g., all unit tests in auth.spec.ts must pass without altering the behavior of other endpoints.

With this structure, every time you assign work to the agent, you just swap out the specific content without having to reinvent the task format from scratch.
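One way to keep that structure stable is to hold it in a small template. The sketch below is illustrative only: the AgentTask class, its field names, and the one-line context summary are assumptions made for this example, not part of any agent's API.

```python
from dataclasses import dataclass, field


@dataclass
class AgentTask:
    """Hypothetical container for the six sections listed above."""
    objective: str
    scope: list[str]
    context: str
    io_contract: str
    constraints: list[str] = field(default_factory=list)
    done_when: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Return the task as plain text, one labeled section per block."""
        def bullets(items: list[str]) -> str:
            return "\n".join(f"- {item}" for item in items) or "- (none)"

        sections = [
            ("Objective", self.objective),
            ("Scope", bullets(self.scope)),
            ("Context", self.context),
            ("Expected Input and Output", self.io_contract),
            ("Constraints", bullets(self.constraints)),
            ("Completion Criteria", bullets(self.done_when)),
        ]
        return "\n\n".join(f"{title}:\n{body}" for title, body in sections)


# Illustrative values; the context sentence is a made-up summary.
task = AgentTask(
    objective="Fix login API returning 500 instead of 401 on a wrong password.",
    scope=["src/auth/UserAuth.ts (login function only)"],
    context="login checks credentials, then issues a token.",
    io_contract="Wrong password -> 401 with body { error: 'INVALID_CREDENTIALS' }.",
    constraints=["Do not modify validateToken.", "Do not add new libraries."],
    done_when=["All tests in auth.spec.ts pass."],
)
print(task.render())
```

Swapping the field values is then the only work needed for the next delegation; the section order and labels stay fixed.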

An effective task should have a consistent structure to allow for repeatable delegation

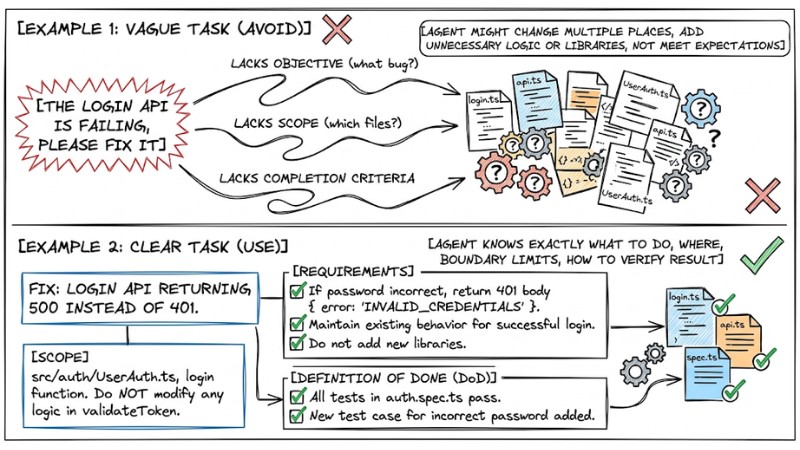

Examples of Clear vs. Vague Tasks

Example 1: Vague Task (Avoid):

The login API is failing, please fix it

This task lacks a specific objective (what bug?), lacks scope (which files?), and lacks completion criteria. The agent might change multiple places, add unnecessary logic or libraries, and still not meet your expectations.

Example 2: Clear Task (Use):

Fix: Login API returning 500 instead of 401.

Scope: src/auth/UserAuth.ts, login function. Do NOT modify any logic in validateToken.

Requirements:

- If the password is incorrect, return 401 with the body { error: 'INVALID_CREDENTIALS' }.

- Maintain existing behavior for successful login cases.

- Do not add any new libraries.

Definition of Done (DoD): All existing tests in auth.spec.ts must pass, and a new test case for incorrect password must be added.

In the second example, the agent knows exactly what to do, where to do it, the boundary limits, and how to verify the result.
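The difference between the two examples can also be checked mechanically before a task is sent. The following is a rough sketch, assuming a few crude text heuristics (the CHECKS patterns and the missing_sections helper are invented for illustration); it will miss plenty of cases and is no substitute for reading the task.

```python
import re

# Crude, assumed heuristics: each pattern looks for a textual hint of one section.
CHECKS = {
    "scope": re.compile(r"\bscope\s*:|[\w./-]+\.(ts|js|py|go|java)\b", re.IGNORECASE),
    "constraints": re.compile(r"\bdo not\b|\bdon't\b|\bmust not\b", re.IGNORECASE),
    "completion criteria": re.compile(
        r"definition of done|\bDoD\b|\btests?\b.*\bpass", re.IGNORECASE
    ),
}


def missing_sections(task_text: str) -> list[str]:
    """Return the names of sections the task text shows no sign of."""
    return [name for name, pattern in CHECKS.items() if not pattern.search(task_text)]


vague = "The login API is failing, please fix it"
clear = (
    "Fix: Login API returning 500 instead of 401.\n"
    "Scope: src/auth/UserAuth.ts, login function. "
    "Do NOT modify any logic in validateToken.\n"
    "Definition of Done (DoD): All existing tests in auth.spec.ts must pass."
)

print(missing_sections(vague))  # ['scope', 'constraints', 'completion criteria']
print(missing_sections(clear))  # []
```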

Comparison: Clear vs. Vague Tasks

How to break large tasks into smaller ones

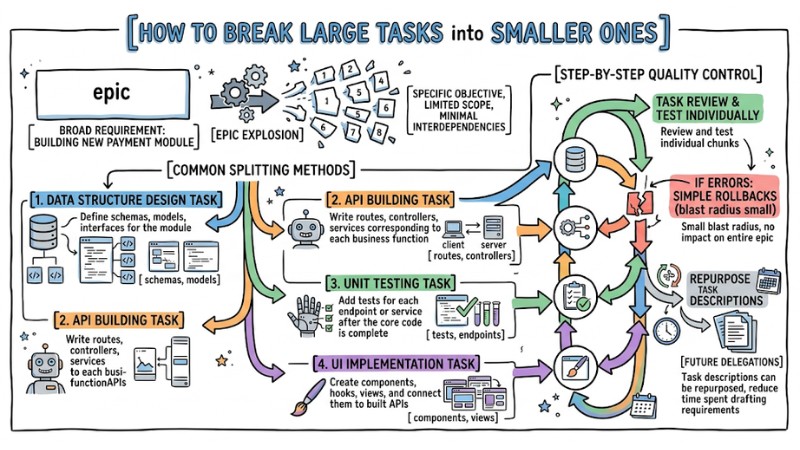

Coding agents operate much more effectively when each task has a specific objective, limited scope, and minimal interdependencies. For broad requirements like building a new payment module or overhauling an admin dashboard, it's best to split them into a chain of small tasks, with each handling a distinct chunk of work.

Common splitting methods include:

- Data Structure Design Task: Define schemas, models, and interfaces for the module.

- API Building Task: Write routes, controllers, and services corresponding to each business function.

- Unit Testing Task: Add tests for each endpoint or service after the core code is complete.

- UI Implementation Task: Create components, hooks, views, and connect them to the built APIs.

This breakdown helps control quality step-by-step, as each task can be reviewed and tested individually before moving to the next. If errors are spotted, rollbacks are simpler because the blast radius is small and doesn't impact the entire epic. Furthermore, task descriptions used for one module can be repurposed for others with similar structures, reducing the time spent drafting requirements for future delegations.
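When the sub-tasks depend on each other, the order in which to hand them over can be derived instead of guessed. This is a minimal sketch using the standard library's graphlib module (Python 3.9+); the sub-task names and the dependency map are assumptions that mirror the four splitting methods above.

```python
from graphlib import TopologicalSorter

# Each hypothetical sub-task maps to the sub-tasks that must be finished first.
depends_on = {
    "data-structures": set(),
    "api": {"data-structures"},
    "unit-tests": {"api"},
    "ui": {"api"},
}

# static_order() yields every sub-task after all of its prerequisites.
order = list(TopologicalSorter(depends_on).static_order())
for step, name in enumerate(order, start=1):
    print(f"{step}. {name}")
```

Here the data structures come first and the API second; the unit-test and UI tasks both depend only on the API, so either can follow.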

Breaking down large tasks into smaller sub-tasks for Coding Agents

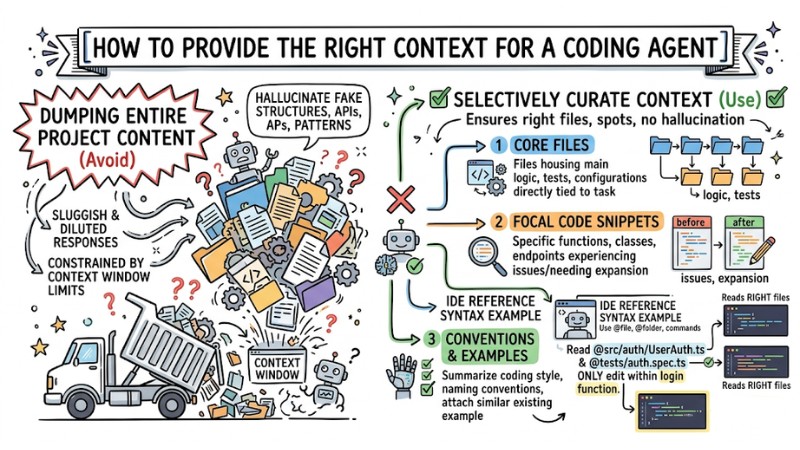

How to provide the right context for a coding agent

Because coding agents are constrained by a finite context window, dumping the entire project into a prompt usually produces slower, less focused responses. Instead, curate context selectively across three main groups:

- Core Files: Files housing the main logic, tests, or configurations directly tied to the task.

- Focal Code Snippets: The specific functions, classes, or endpoints experiencing issues or needing expansion, extracted just enough to understand the before-and-after.

- Conventions and Examples: Summarize the coding style, naming conventions, and attach a similar existing example (endpoint, test, component) so the agent can mimic the structure.

In an IDE, use reference syntax like @file, @folder, or equivalent commands, for example:

"Read @src/auth/UserAuth.ts and @tests/auth.spec.ts, only edit within the login function."

This ensures the agent reads the right files, edits the right spots, and doesn't hallucinate fake structures, APIs, or patterns that don't exist in the codebase.
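The three context groups can be treated as priorities when space is limited. The sketch below assumes a simple character budget as a stand-in for the real token limit; ContextItem, select_context, the placeholder contents, and the docs/architecture.md entry are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    label: str      # a file path or a snippet name
    text: str       # the content that would be attached
    priority: int   # 1 = core file, 2 = focal snippet, 3 = convention or example


def select_context(items: list[ContextItem], budget_chars: int) -> list[ContextItem]:
    """Pick items in priority order, skipping any that would exceed the budget."""
    chosen, used = [], 0
    for item in sorted(items, key=lambda i: i.priority):
        if used + len(item.text) <= budget_chars:
            chosen.append(item)
            used += len(item.text)
    return chosen


# Placeholder contents: only the lengths matter for this example.
items = [
    ContextItem("docs/architecture.md", "a" * 5000, 3),
    ContextItem("src/auth/UserAuth.ts", "b" * 1200, 1),
    ContextItem("tests/auth.spec.ts", "c" * 800, 1),
    ContextItem("login function excerpt", "d" * 300, 2),
]
picked = select_context(items, budget_chars=3000)
print([item.label for item in picked])
```

With a budget of 3000 characters, the two core files and the focal snippet fit, while the long architecture document is left out.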

Providing the right context to Coding Agents for improved output quality

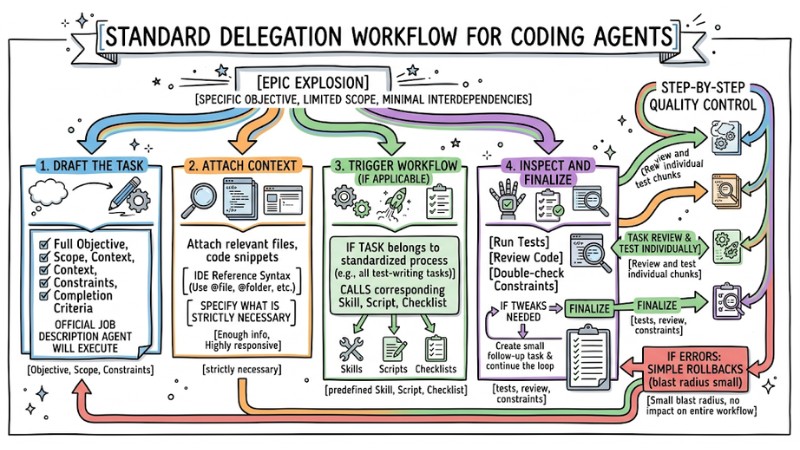

Standard delegation workflow for coding agents

You can apply a repeatable 4-step workflow every time you assign a task to a coding agent:

- Draft the task: Write the task with a full objective, scope, context, constraints, and completion criteria. This is the official job description the agent will execute.

- Attach context: Attach relevant files and code snippets using IDE reference syntax. Only specify what is strictly necessary so the agent has enough info while remaining highly responsive.

- Trigger workflow (if applicable): If this task belongs to a standardized process (e.g., all test-writing tasks follow the same flow), trigger the corresponding workflow by calling a predefined skill, script, or checklist.

- Inspect and finalize: After the agent returns the result, run tests, review the code, and double-check constraints. If tweaks are needed, create a small follow-up task and continue the loop.

This workflow maintains clear boundaries: the task states what must be done, while workflows and skills only standardize how it gets done.
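The loop in step 4 can be sketched as a small function. Everything here is an assumption made for illustration: delegate, run_agent, and checks_pass are hypothetical names, and the agent is replaced by a stub so the example runs on its own.

```python
from typing import Callable


def delegate(
    task: str,
    context: list[str],
    run_agent: Callable[[str, list[str]], str],
    checks_pass: Callable[[str], bool],
    max_rounds: int = 3,
) -> tuple[str, int, bool]:
    """Hand over a drafted task with its context, inspect, and retry if needed.

    Returns the last result, the number of rounds used, and whether checks passed.
    """
    current_task = task
    result = ""
    for round_no in range(1, max_rounds + 1):
        result = run_agent(current_task, context)   # the agent executes the task
        if checks_pass(result):                     # inspect: tests, review, constraints
            return result, round_no, True
        # A failed inspection becomes a small follow-up task.
        current_task = (
            f"Follow-up to: {task}\n"
            "The previous attempt failed review; fix only what failed."
        )
    return result, max_rounds, False


def fake_agent(task: str, context: list[str]) -> str:
    """Stub standing in for a real agent call: succeeds only on a follow-up."""
    return "patch-v2" if task.startswith("Follow-up") else "patch-v1"


result, rounds, passed = delegate(
    "Fix login API returning 500 instead of 401",
    ["@src/auth/UserAuth.ts", "@tests/auth.spec.ts"],
    run_agent=fake_agent,
    checks_pass=lambda patch: patch == "patch-v2",
)
print(result, rounds, passed)  # patch-v2 2 True
```

Capping the number of rounds is a design choice for this sketch: if the checks still fail after a few follow-ups, the task itself probably needs to be redrafted or split.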

Standard Task Delegation Workflow for Coding Agents

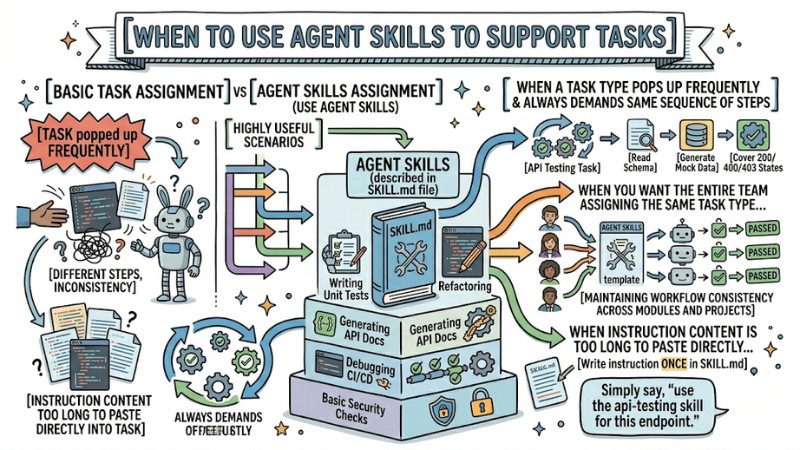

When to use Agent Skills to support tasks

Agent Skills are "skill packages" described in a SKILL.md file, used to standardize how agents handle repetitive task types like writing unit tests, refactoring, generating API docs, debugging CI/CD, or running basic security checks. You aren't required to have Agent Skills to assign tasks, but they are highly useful in the following scenarios:

- When a task type comes up frequently and always demands the same sequence of steps (e.g., every API testing task must read the schema, generate mock data, and cover the 200/400/403 status codes).

- When you want the entire team assigning the same task type to the agent while maintaining workflow consistency across modules and projects.

- When instruction content is too long to paste directly into the task; instead, you write it in SKILL.md once, and in the task simply say, "use the api-testing skill for this endpoint."
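To make the idea concrete, here is a sketch that generates the text of a SKILL.md file for the api-testing example. The layout is an assumption (a short name/description header followed by numbered steps), and render_skill is a hypothetical helper; check your agent's documentation for the exact format it expects.

```python
def render_skill(name: str, description: str, steps: list[str]) -> str:
    """Build SKILL.md text: a small metadata header followed by numbered steps."""
    # Assumed layout: a name/description header, then the instructions.
    header = f"---\nname: {name}\ndescription: {description}\n---"
    body = "\n".join(f"{n}. {step}" for n, step in enumerate(steps, start=1))
    return f"{header}\n\n# {name}\n\n{body}\n"


skill_text = render_skill(
    name="api-testing",
    description="Write API tests for one endpoint.",
    steps=[
        "Read the endpoint schema.",
        "Generate mock data.",
        "Cover the 200, 400, and 403 responses.",
    ],
)
print(skill_text)

# The task itself then only needs a one-line reference to the skill.
task_line = "Use the api-testing skill for this endpoint."
```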

Agent Skills are typically used to standardize how agents handle repetitive tasks

Frequently Asked Questions

How long should a task for a coding agent be?

The content should be just long enough to describe the objective, scope, context, constraints, and completion criteria in a few short paragraphs, without drifting into unrelated theoretical explanations. If you have to scroll heavily to finish reading it, move the excess into separate documents or skills, or split it into separate tasks.

Should I assign multiple jobs in one task?

You shouldn't pack too many disparate objectives into one task, especially if they touch multiple modules or system layers. Ideally, each task should focus on one primary logical change (e.g., fixing 1 bug, adding 1 endpoint, writing tests for 1 module).

How do I stop the coding agent from altering unrelated parts?

Strictly limit the scope in the task (specific files, functions, endpoints) and explicitly state the "untouchable" sections. Once the agent proposes a patch, always run the full test suite and review diffs to ensure no rogue edits occurred outside the permitted zone.
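Part of the diff review can be automated by comparing the changed paths against the scope stated in the task. This is a minimal sketch: out_of_scope is a hypothetical helper, and the list of changed paths is hard-coded here, though in practice it could come from a command such as git diff --name-only.

```python
from fnmatch import fnmatch


def out_of_scope(changed_paths: list[str], allowed_patterns: list[str]) -> list[str]:
    """Return changed paths that match none of the allowed glob patterns."""
    return [
        path for path in changed_paths
        if not any(fnmatch(path, pattern) for pattern in allowed_patterns)
    ]


# Scope from the task, and the files the agent's patch actually touched.
allowed = ["src/auth/UserAuth.ts", "tests/auth.spec.ts"]
changed = ["src/auth/UserAuth.ts", "tests/auth.spec.ts", "routes/payment.ts"]

print(out_of_scope(changed, allowed))  # ['routes/payment.ts']
```

A non-empty result is a signal to reject or trim the patch before merging. It does not replace reviewing the diff, since an edit inside an allowed file can still touch a function the task declared untouchable.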

When should I split a task into multiple steps?

When a requirement touches multiple layers (database, API, UI) or involves fixing logic while simultaneously writing tests, refactoring, and documenting, it should be split into a chain of small tasks. Each step should have a clear output (e.g., schema done, API done, tests done), making it easy to review and roll back.

Do I need to attach files and tests to the task?

Yes, especially the main logic files and related test files, so the agent reads the correct current context. Specifying the test files that need to pass or be expanded also helps the agent understand the completion criteria and limits the risk of breaking currently correct behaviors.

Designing tasks for coding agents with clear objectives, limited scopes, selective context, and specific completion criteria forms the foundation for stable and easily reviewable AI programming. When you combine this delegation method with standardized workflows or Agent Skills, you can boost accuracy, slash revision cycles, and truly leverage the AI as a reliable programming assistant.