AI Agent Orchestration: 6 Optimal System Design Patterns

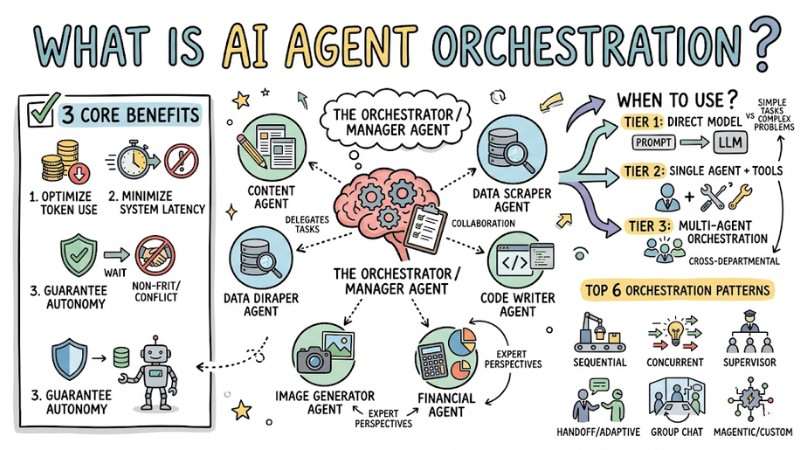

AI Agent Orchestration is the system for routing, monitoring, and controlling how multiple AI Agents communicate with each other to solve a complex problem. Like a conductor leading a symphony orchestra, it ensures each Agent knows exactly when to speak up, when to wait, and who to hand results off to. For AI engineers and tech managers, the choice of Multi-agent Systems (MAS) architecture directly affects two make-or-break factors of Enterprise AI Infrastructure: operational cost and system latency.

Key Takeaways

- Concept and importance: Understand what an AI orchestration system is and how it helps enterprises optimize token costs, reduce latency, and boost Agent autonomy.

- When to use it: Master the complexity escalation principle to pick the right method, from direct model calls to Multi-agent systems, dodging resource waste.

- 6 Design Patterns: Grasp the characteristics, pros, and cons of the 6 popular orchestration models, helping you pick the optimal architecture for specific real-world problems.

- Selection criteria: Know how to evaluate and trade off between budget, speed, and control level to pick the most suitable model for your enterprise infrastructure.

- Best Practices: Learn key techniques like context management, system observability, and security to ship AI systems from the lab to production safely and efficiently.

- FAQ: Grasp battle-tested questions on routing, operational KPIs, and how to apply models for specific domains like Voice AI or Human-in-the-loop.

What is AI Agent Orchestration and Why is it important?

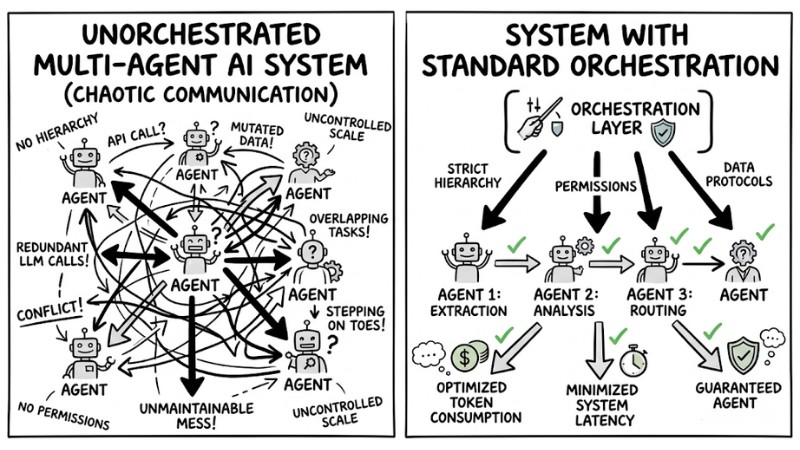

AI Agent Orchestration is the workflow coordination infrastructure that strictly defines the hierarchy, permissions, and data exchange protocols between multiple specialized Agents. When developing AI apps, many engineering squads skip the orchestration design phase upfront. As the system scales, Agents then start stepping on each other's toes and making overlapping API calls, and the architecture turns into an unmaintainable mess.

The difference between an unorchestrated Multi-agent AI system and a system with standard Orchestration

Applying methodical Multi-agent coordination yields 3 core benefits for enterprises:

- Optimize Token consumption: The system triggers only the Agent needed for each specific task, which avoids redundant Large Language Model (LLM) calls.

- Minimize System latency: Smart routing cuts the wait time between response steps, which matters most for real-time systems.

- Guarantee Agent autonomy: Clearly demarcated operational boundaries let each Agent work autonomously without conflicting with other Agents in the network.

When Should You Use Multi-Agent Orchestration?

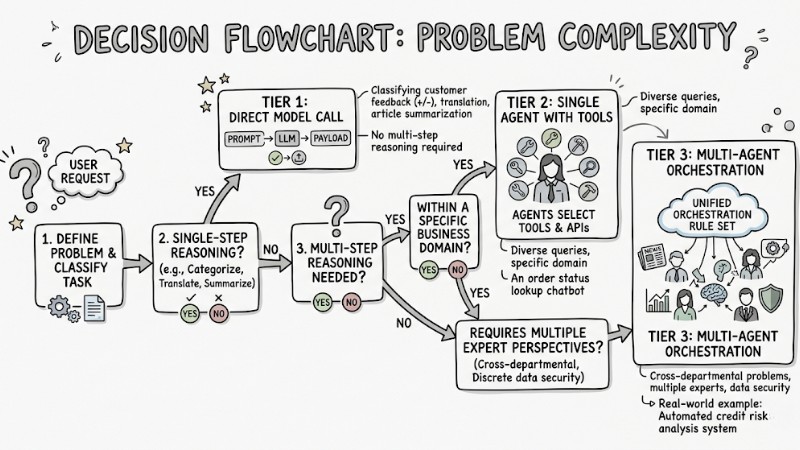

The most common mistake when adopting AI is "over-engineering": using a sledgehammer to crack a nut. Cramming multiple Agents into a simple task only spikes costs and latency. To dodge this waste, I recommend applying the complexity escalation principle across these 3 tiers:

Direct model call

- How it works: Sending a prompt straight to the LLM and receiving the response.

- When to use it: Self-contained tasks that don't require multi-step reasoning.

- Real-world example: Classifying customer feedback into Positive/Negative, text translation, article summarization.

Single agent with tools

- How it works: A single monolithic Agent autonomously reasons and selects tools (APIs, Databases) to close out the job.

- When to use it: Diverse queries that remain strictly within a specific business domain.

- Real-world example: An order status lookup chatbot hooked into an internal database.

Multi-agent orchestration

- How it works: Multiple expert Agents working together under a unified orchestration rule set.

- When to use it: Cross-departmental problems demanding multiple expert perspectives or featuring discrete data security barriers.

- Real-world example: An automated credit risk analysis system (An Agent scraping news, an Agent parsing financial reports, an Agent synthesizing the final decision).

Deciding whether to use Multi-agent or Single-agent based on problem complexity
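The three tiers above can be read as a small decision rule: start with the simplest option and escalate only when the task demands it. Here is a minimal sketch of that rule. The `Task` fields and the `choose_tier` function are hypothetical names invented for illustration, not part of any library.

```python
from dataclasses import dataclass


@dataclass
class Task:
    # All three fields are illustrative assumptions about how a task might be described.
    needs_tools: bool              # does the task need APIs, databases, or multi-step reasoning?
    domains: int                   # number of distinct business domains involved
    has_security_boundaries: bool  # must data stay isolated between sub-tasks?


def choose_tier(task: Task) -> str:
    """Return the simplest tier that can handle the task."""
    if task.domains > 1 or task.has_security_boundaries:
        return "multi_agent_orchestration"
    if task.needs_tools:
        return "single_agent_with_tools"
    return "direct_model_call"


# Sentiment classification: one domain, no tools.
print(choose_tier(Task(needs_tools=False, domains=1, has_security_boundaries=False)))
# Credit risk analysis: news, financial reports, and a final decision.
print(choose_tier(Task(needs_tools=True, domains=3, has_security_boundaries=True)))
```

The checks run from the most demanding condition to the least, so a task only lands in the multi-agent tier when a cheaper tier cannot cover it.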

Top 6 Most Popular AI Agent Orchestration Patterns

Every problem demands a different Agent arrangement. Below are the 6 most common orchestration architectures on the market today.

1. Sequential Orchestration

This model operates like a manufacturing assembly line (Linear Pipeline). The output of Agent A becomes the input of Agent B, chaining together in a static sequence.

- Mechanism: Agents are chained together via explicit Linear dependencies.

- Pros: Highly predictable. Easy to debug because you know exactly where the data payload flows. Saves tokens since Agents never debate in circles.

- Cons: Compounding latency. Total response time is the sum of every stage, so one slow Agent blocks everything downstream. The pipeline also cannot handle problems that require backtracking to an earlier step.

- Best for: Content automation pipelines (Example: Outlining Agent -> Writing Agent -> Spell-check Agent).

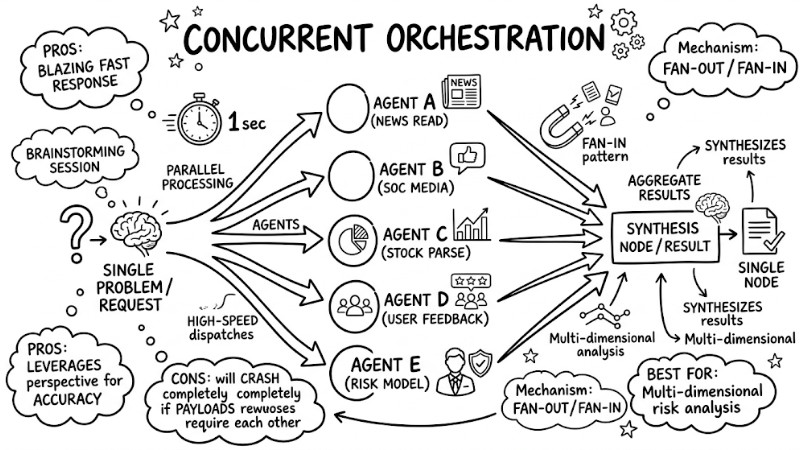
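As a rough illustration of the linear pipeline, the sketch below chains three stand-in Agents so that each output becomes the next input. The Agent functions are plain Python stubs (assumptions for illustration); in a real system each one would wrap an LLM call.

```python
from typing import Callable

Agent = Callable[[str], str]


def outline_agent(topic: str) -> str:
    # Stub: a real Agent would ask an LLM for an outline.
    return f"Outline for: {topic}"


def writing_agent(outline: str) -> str:
    # Stub: a real Agent would expand the outline into a draft.
    return f"Draft based on [{outline}]"


def spellcheck_agent(draft: str) -> str:
    # Stub: fixes one hard-coded typo to show the final stage touching the payload.
    return draft.replace("teh", "the")


def run_sequential(agents: list[Agent], payload: str) -> str:
    """Feed the output of each Agent into the next one, in a fixed order."""
    for agent in agents:
        payload = agent(payload)
    return payload


result = run_sequential(
    [outline_agent, writing_agent, spellcheck_agent],
    "teh orchestration guide",
)
print(result)  # Draft based on [Outline for: the orchestration guide]
```

Because the order is fixed in the list, a failure can be traced to a specific stage simply by inspecting the payload between steps.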

2. Concurrent Orchestration

If Sequential is an assembly line, Concurrent is a high-speed Brainstorming session. Here, the system dispatches the same problem to multiple Agents for parallel processing, then synthesizes the results.

- Mechanism: Applies the Fan-out/Fan-in pattern (splitting into parallel processing flows, then aggregating the results into a single node).

- Pros: Fast responses, since total latency is roughly that of the slowest Agent rather than the sum of all of them. Leverages multiple independent perspectives simultaneously to improve accuracy.

- Cons: Breaks down when Agents need each other's outputs to function, because parallel branches cannot see one another's results.

- Best for: Multi-dimensional risk analysis systems (Bot A reads the news, Bot B scrapes social media, Bot C parses stock charts, all at the same time).

Fan-out/Fan-in architecture splitting tasks in parallel within Concurrent Orchestration
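A minimal sketch of Fan-out/Fan-in using Python's standard `asyncio` module is shown below. The three Agent coroutines are stubs invented for illustration; `asyncio.sleep` stands in for the network wait of a real LLM or API call.

```python
import asyncio


async def news_agent(query: str) -> str:
    await asyncio.sleep(0.01)  # simulated I/O wait
    return f"news signal for {query}"


async def social_agent(query: str) -> str:
    await asyncio.sleep(0.01)
    return f"social signal for {query}"


async def chart_agent(query: str) -> str:
    await asyncio.sleep(0.01)
    return f"chart signal for {query}"


async def fan_out_fan_in(query: str) -> str:
    # Fan-out: start all three Agents at once on the same query.
    results = await asyncio.gather(
        news_agent(query),
        social_agent(query),
        chart_agent(query),
    )
    # Fan-in: merge the independent results into one payload.
    # gather() returns results in the order the coroutines were passed in.
    return " | ".join(results)


summary = asyncio.run(fan_out_fan_in("ACME"))
print(summary)
```

Note that none of the Agents reads another Agent's result. If one of them needed to, this pattern would no longer fit and a sequential step would be required.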

3. Supervisor Pattern

This architecture mimics a traditional corporate structure. An Agent acting as the Director (Orchestrator) intakes the request, slices the task, and delegates the workload to staff Agents.

- Mechanism: Hierarchical delegation with role-based permissions, similar in spirit to RBAC (Role-Based Access Control). The Orchestrator retains ultimate control over task delegation.

- Pros: Guarantees high transparency. You can easily audit which Agent is misbehaving and tweak it.

- Cons: Bottleneck risks. If the Supervisor gets overloaded or routes to the wrong Agent, the entire workflow pipeline breaks.

- Best for: Hooking AI into enterprise ERP systems, where you need tight control over every command execution step.

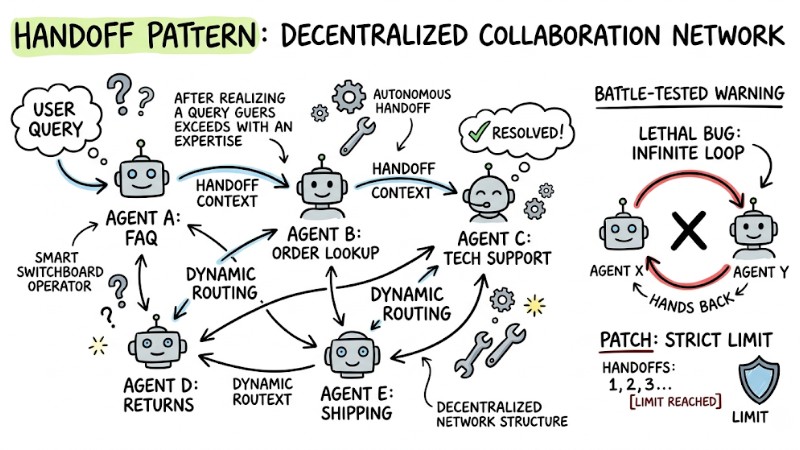
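The sketch below shows the shape of the Supervisor flow: intake, slice, delegate, and record every decision for auditing. The keyword-based `route` function and the worker names are assumptions made for illustration. A production supervisor would typically ask an LLM to do the slicing and routing.

```python
from typing import Callable


def finance_agent(task: str) -> str:
    return f"finance handled: {task}"


def inventory_agent(task: str) -> str:
    return f"inventory handled: {task}"


def general_agent(task: str) -> str:
    return f"general handled: {task}"


WORKERS: dict[str, Callable[[str], str]] = {
    "finance": finance_agent,
    "inventory": inventory_agent,
    "general": general_agent,
}


def route(task: str) -> str:
    """Hypothetical keyword router standing in for an LLM routing decision."""
    text = task.lower()
    if "invoice" in text or "payment" in text:
        return "finance"
    if "stock" in text or "inventory" in text:
        return "inventory"
    return "general"


def supervise(request: str, audit_log: list[tuple[str, str]]) -> list[str]:
    """Slice the request into sub-tasks, delegate each one, and log who got what."""
    results = []
    for task in (part.strip() for part in request.split(" and ")):
        worker = route(task)
        audit_log.append((worker, task))  # audit trail of every delegation
        results.append(WORKERS[worker](task))
    return results


log: list[tuple[str, str]] = []
print(supervise("Pay invoice 17 and check stock for SKU-42", log))
print(log)
```

Every request passes through `supervise`, which is what makes the pattern easy to audit and also what makes the supervisor a potential bottleneck.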

4. Handoff / Adaptive Orchestration

This model acts like a smart switchboard operator. Upon realizing a query exceeds its domain expertise, the responding Agent autonomously hands it off to a more suitable Agent.

- Mechanism: This model utilizes Dynamic routing, directly handing off context between Agents within a decentralized network structure.

- Battle-tested warning: The most dangerous failure mode in this architecture is the infinite loop, where Agent A hands off to B and B hands straight back to A. To prevent this, I always hardcode a strict limit on the maximum number of handoffs.

- Best for: CS switchboard systems (General FAQ Bot -> Order Lookup Bot -> Tech Support Bot).

Decentralized collaboration network in the Handoff Pattern
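The handoff limit mentioned in the warning above can be sketched as a simple counter around the routing loop. Everything here is illustrative: the Agent stubs, the `("answer" | "handoff", value)` return convention, and the default limit of 3 are assumptions, not a standard API.

```python
from typing import Callable

# Each Agent returns ("answer", text) or ("handoff", name_of_next_agent).
Step = tuple[str, str]


def faq_agent(query: str) -> Step:
    if "order" in query:
        return ("handoff", "orders")
    return ("answer", "FAQ: see our help center")


def orders_agent(query: str) -> Step:
    if "broken" in query:
        return ("handoff", "tech")
    return ("answer", "Orders: your package has shipped")


def tech_agent(query: str) -> Step:
    return ("answer", "Tech support: replacement requested")


# Two deliberately misconfigured Agents that bounce the query back and forth.
def ping_agent(query: str) -> Step:
    return ("handoff", "pong")


def pong_agent(query: str) -> Step:
    return ("handoff", "ping")


AGENTS: dict[str, Callable[[str], Step]] = {
    "faq": faq_agent, "orders": orders_agent, "tech": tech_agent,
    "ping": ping_agent, "pong": pong_agent,
}


def run_handoff(query: str, start: str = "faq", max_handoffs: int = 3) -> str:
    """Follow handoffs until an Agent answers or the handoff budget runs out."""
    current, handoffs = start, 0
    while True:
        kind, value = AGENTS[current](query)
        if kind == "answer":
            return value
        handoffs += 1
        if handoffs > max_handoffs:
            raise RuntimeError(f"handoff limit of {max_handoffs} exceeded")
        current = value


print(run_handoff("my order arrived broken"))  # two handoffs, then an answer
```

Calling `run_handoff("anything", start="ping")` raises `RuntimeError` instead of looping forever, which is the behavior the hard limit is meant to guarantee.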

5. Group Chat Orchestration

This model pulls all the Agents into a "virtual meeting room". A Chat Manager dictates who has the floor next to collaboratively resolve an open-ended problem.

- Mechanism: Utilizes Maker-checker loops. Here, Agent A pitches an idea, Agent B critiques it, looping until consensus is reached.

- Cost analysis: This is the fastest "cash-burning" model. The entire chat history keeps growing and is re-injected into the LLM on every turn, so cumulative Token consumption grows roughly with the square of the number of turns. Use it only when absolutely necessary.

- Best for: Planning complex software architectures, writing creative movie scripts.
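To get a feel for how quickly the re-injected history adds up, here is a back-of-the-envelope estimate. It assumes every message has the same size and that each speaker reads the full history before replying. Both are simplifications, and `group_chat_input_tokens` is a hypothetical helper. Under these assumptions the total input cost grows with the square of the turn count.

```python
def group_chat_input_tokens(turns: int, tokens_per_message: int) -> int:
    """Total input tokens when every turn re-sends the full history so far."""
    total = 0
    history = 0
    for _ in range(turns):
        total += history               # the speaker reads everything said so far
        history += tokens_per_message  # then appends its own message
    return total


# Doubling the number of turns roughly quadruples the input token bill.
print(group_chat_input_tokens(10, 200))  # 9000
print(group_chat_input_tokens(20, 200))  # 38000
```

The same total can be written in closed form as `tokens_per_message * turns * (turns - 1) / 2`, which is why capping the number of turns or summarizing the history has such a large effect on cost.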

6. Magentic / Custom Pattern

This model is for problem spaces that no off-the-shelf template can satisfy. This architecture requires developers to dive deep into the codebase to engineer custom logic.

- Mechanism: A lead Agent drafts and maintains the plan document, and developers use Agent SDKs (like LangChain, AutoGen) to route the flow manually.

- Pros: Maximum customization, capable of handling the most complex enterprise pipelines.

- Cons: R&D cost is very high, and the work demands a squad of experienced AI engineers.

- Best for: Highly specialized Core Banking systems or architecting NPC behaviors in Game AI.

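Example of a basic custom-logic setup with LangGraph (`AgentState`, `plan_step`, `execute_step`, and `check_status` are assumed to be defined elsewhere in your codebase):

    from langgraph.graph import StateGraph, END

    # AgentState is the schema (for example a TypedDict) of the state shared between nodes
    workflow = StateGraph(AgentState)
    workflow.add_node("planner", plan_step)
    workflow.add_node("executor", execute_step)
    workflow.set_entry_point("planner")  # the graph needs an explicit starting node
    workflow.add_edge("planner", "executor")
    # check_status returns "continue" to loop back to the planner, or "done" to finish
    workflow.add_conditional_edges("executor", check_status, {"continue": "planner", "done": END})
    app = workflow.compile()  # build the runnable graph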

Criteria for Selecting the Right Orchestration Pattern

Designing AI systems is always a tradeoff game because no single model is cheap, blazing fast, and absolutely brilliant all at once. You need to rely on the comparison table below to make your decision:

| Pattern | Token Cost | Speed | Control Level |
|---|---|---|---|
| Sequential | Low | Slow | High (Linear) |
| Concurrent | Medium | Blazing fast | Medium |
| Supervisor | High | Slow | Absolute |
| Handoff | Medium | Fast | Low (Free-flowing) |
| Group Chat | Very high | Very slow | Low (Debate-dependent) |
| Custom Pattern | Customizable | Customizable | Manual hard-coding |

Best Practices When Architecting Multi-agent Systems

Regardless of which model you pick, to ship the system from the lab to production, you must strictly adhere to these principles:

- Optimize the Context Window by pre-summarizing payloads: Instead of piping the entire chat history from one Agent to another, require the upstream Agent to summarize the payload before handoff. This prevents token bloat and lowers the risk of hallucinations caused by an overloaded context.

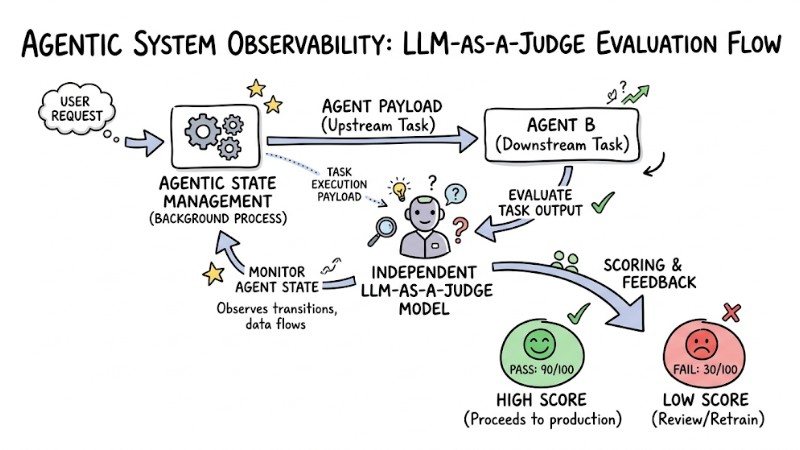

- Ensure AI System Observability: Don't let the system turn into a black box. Run an independent "LLM-as-a-judge" model whose only job is to score Agent outputs and monitor the Agentic state management running in the background.

- Enforce least privilege in security: Do not grant full database access to all Agents. For example: A news-scraping Agent is only granted web-reading tools, while a data-deletion Agent can only be triggered upon human approval.

LLM-as-a-judge evaluation flow monitoring and scoring Agent tasks
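The least-privilege principle from the list above can be enforced with an explicit allow-list that is checked before any tool runs. The sketch below is one possible shape. The Agent names, tool names, and the `authorize` helper are all hypothetical.

```python
# Which tools each Agent may call. Anything not listed is denied by default.
TOOL_PERMISSIONS: dict[str, set[str]] = {
    "news_scraper": {"read_web"},
    "data_cleaner": {"read_db", "delete_records"},
}

# Destructive tools that additionally need a human sign-off.
REQUIRES_HUMAN_APPROVAL: set[str] = {"delete_records"}


def authorize(agent: str, tool: str, human_approved: bool = False) -> bool:
    """Return True only if the Agent holds the permission and any required approval."""
    if tool not in TOOL_PERMISSIONS.get(agent, set()):
        return False  # the Agent was never granted this tool
    if tool in REQUIRES_HUMAN_APPROVAL and not human_approved:
        return False  # permission exists, but a human has not approved this call
    return True


print(authorize("news_scraper", "read_web"))                            # True
print(authorize("news_scraper", "delete_records"))                      # False
print(authorize("data_cleaner", "delete_records"))                      # False
print(authorize("data_cleaner", "delete_records", human_approved=True)) # True
```

Denying by default means a newly added Agent can do nothing until someone deliberately grants it a tool, which is usually the safer failure mode.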

Frequently Asked Questions (FAQ)

How do Deterministic and Dynamic routing differ in Orchestration?

Deterministic routing is like running a train on existing tracks; the Agent sequence is hard-coded from the start (like Sequential). Dynamic routing is like driving in a city; Agents self-evaluate the real-time state to decide who gets handed the next step (like Handoff).

Which KPIs should you monitor when operating Multi-agent Systems?

You need to track 3 main metrics:

- Cost per Task (Total token burn per session).

- End-to-End Latency (The delay from the user prompt to the final output).

- Handoff Failure Rate (The percentage of times an Agent handoff throws an error or hits a dead end).
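As a sketch of how these three metrics could be computed from run logs, consider the helper below. The `TaskRun` record, its field names, and the per-1k-token price are assumptions for illustration. Real pricing usually differs between input and output tokens.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    tokens_used: int        # total tokens consumed in the session
    latency_seconds: float  # user prompt to final output
    handoffs: int           # handoffs attempted
    failed_handoffs: int    # handoffs that errored or hit a dead end


def compute_kpis(runs: list[TaskRun], usd_per_1k_tokens: float) -> dict[str, float]:
    """Aggregate Cost per Task, End-to-End Latency, and Handoff Failure Rate."""
    if not runs:
        raise ValueError("at least one run is required")
    total_tokens = sum(r.tokens_used for r in runs)
    total_handoffs = sum(r.handoffs for r in runs)
    total_failed = sum(r.failed_handoffs for r in runs)
    return {
        "cost_per_task_usd": total_tokens / 1000 * usd_per_1k_tokens / len(runs),
        "avg_latency_seconds": sum(r.latency_seconds for r in runs) / len(runs),
        # Guard against division by zero when no handoffs happened at all.
        "handoff_failure_rate": total_failed / total_handoffs if total_handoffs else 0.0,
    }


runs = [TaskRun(2000, 1.5, 2, 0), TaskRun(4000, 2.5, 2, 1)]
kpis = compute_kpis(runs, usd_per_1k_tokens=0.01)
print(kpis)
```

Tracking the averages is a starting point. For latency in particular, percentiles such as p95 tend to reveal slow outliers that an average hides.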

Which model should you use for Voice AI apps requiring low latency?

For Voice AI apps, avoid the Supervisor and Group Chat models because their extra reasoning rounds add too much wait time. Use the Adaptive / Handoff Pattern or Concurrent Orchestration instead to keep response times low (ideally sub-second), so the voice conversation flows naturally.

Is Human-in-the-loop (HITL) necessary in Multi-agent setups?

Human-in-the-loop (HITL) is mandatory for high-risk tasks. No matter how well the Agent squad orchestrates, final decisions on financial transactions, legal actions, or core data mutations must pass through a human approval gate.

What is AI Agent Orchestration?

AI Agent Orchestration is the process of coordinating, managing, and wiring multiple AI agents to operate together to complete complex tasks. It's like a conductor leading an orchestra, ensuring the agents collaborate seamlessly.

Why is AI Agent Orchestration critical for enterprises?

Orchestration helps optimize costs, cut system latency, and improve scalability for complex AI apps, turning them from a "chaotic mess" into efficient enterprise AI infrastructure.

When should you use Multi-Agent Orchestration instead of directly calling an LLM?

You need Multi-Agent Orchestration when the problem space demands decomposition into multiple processing steps, requires collaboration between specialized agents, or when a single monolithic agent lacks the bandwidth to process the request effectively.

What are the most popular orchestration patterns?

The most popular patterns include Sequential, Concurrent, Supervisor, Handoff/Adaptive, Group Chat, and Magentic/Custom.

How do Sequential Orchestration and Handoff Orchestration differ?

Sequential Orchestration is a linear pipeline following a static flow (A -> B -> C), while Handoff Orchestration permits agents to freely and dynamically transfer tasks to one another based on context, without a pre-baked sequence.

What is the advantage of Concurrent Orchestration?

Concurrent Orchestration allows multiple agents to work in parallel on the same task. This increases processing speed and leverages multiple distinct perspectives to produce better decisions or analysis.

Are there any optimized patterns for Voice AI systems?

Yes, patterns like Handoff/Adaptive Orchestration or Concurrent Orchestration are typically better suited for Voice AI because they prioritize low latency, driving smooth user experiences and rapid responses.

What are the criteria for selecting the right orchestration pattern?

Selecting a pattern depends on your budget (cost), speed (latency) requirements, the required control level, and the complexity of the problem space. There is no universally perfect pattern, only the one that best fits your enterprise's architecture and goals.


Architecting AI Agent Orchestration isn't about hunting down the most perfect model; it's about picking the solution that best fits your enterprise's resources and technical constraints. If you're hitting bottlenecks routing your current Agent system or need to optimize massive API burn rates, drop a comment or reach out directly so our engineering squad can assist you with deep-dive solutions!
