OpenClaw vs LangChain: Choosing an AI Agent Framework for the Enterprise

Choosing between OpenClaw and LangChain is a critical decision when designing an enterprise AI Agent architecture, because the two platforms play different roles: LangChain in the logic layer and OpenClaw in the operational layer. This article compares them across architecture, design philosophy, development model, cost, and governance to help you choose one, or combine both, for your infrastructure and business goals.

Key Takeaways

- Reasons for Comparison: Understand why OpenClaw and LangChain are worth comparing, so your organization can correctly place each platform: one handles reasoning logic, the other coordinates operational infrastructure.

- Layered Thinking Framework: Learn how to design systems that separate the Intelligence layer from the Execution layer so AI Agents are easier to apply in practice.

- LangChain Characteristics: Explore the code-centric framework that helps developers build large language model (LLM) application flows, integrate RAG, and control detailed logic in Python or TypeScript.

- OpenClaw Characteristics: Identify the configuration-centric platform specialized in maintaining continuous system operation, multi-channel message routing, and direct connection to business applications.

- Selection Criteria: Master the criteria for deciding when to use LangChain, when to choose OpenClaw, and methods for combining both.

- Risk and Cost Assessment: Understand the importance of setting monitoring rules, evaluating the scalability of each platform, and estimating the total cost of ownership before deployment.

- FAQ: Get answers to questions about the substitutability between the two platforms, the concept of the Model Context Protocol, and how to integrate both OpenClaw and LangChain simultaneously in a project.

Why compare OpenClaw vs LangChain?

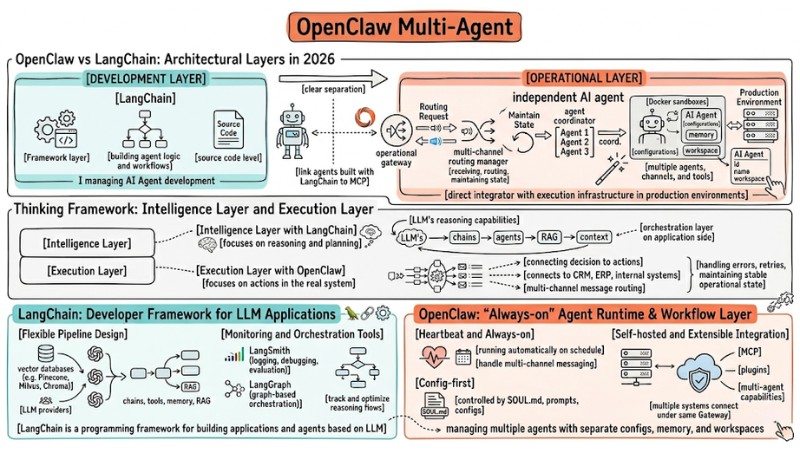

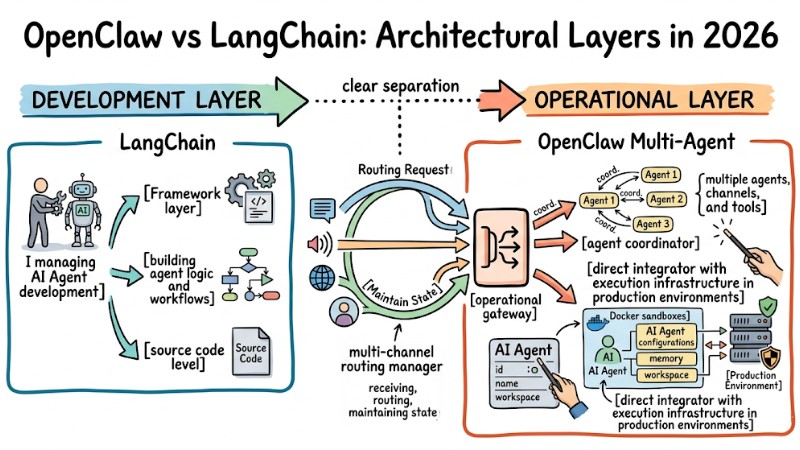

OpenClaw and LangChain are both important platforms for AI Agent development in 2026, but they address different layers of the system architecture. LangChain focuses on building agent logic and workflows in source code, while OpenClaw, in a multi-agent setup, acts as an operational gateway: it coordinates agents, routes messages across channels, and integrates directly with execution infrastructure in production.

In an enterprise rollout, whether you combine LangChain and OpenClaw or prioritize one of them directly affects deployment speed, scalability, and system resilience. LangChain remains the de facto standard at the framework layer, while OpenClaw serves as the practical operational layer for multiple agents, channels, and tools, which keeps the development layer clearly separated from the operational layer.

OpenClaw and LangChain are crucial platforms for AI agent development

Thinking Framework: Intelligence Layer and Execution Layer

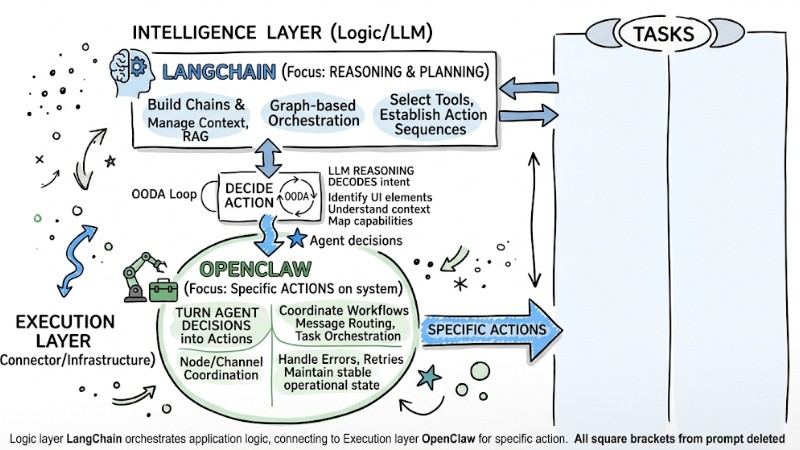

In modern AI Agent architecture, it helps to clearly separate the intelligence layer (reasoning and planning) from the execution layer (actions in real systems). This layering lets you choose the right tool for each task instead of forcing a single framework to cover the entire chain, from reasoning to infrastructure integration.

- Intelligence Layer with LangChain: This layer focuses on the LLM's reasoning capabilities, building chains and agents, managing context, RAG, and graph-based orchestration to select tools and establish action sequences. LangChain acts as the orchestration layer on the application side, where logic handles requests, splits steps, routes subtasks, and combines multiple models or retrievers.

- Execution Layer with OpenClaw: This layer focuses on turning agent decisions into specific actions on the system, via a Gateway that coordinates workflows, message routing, and task orchestration between nodes and channels. OpenClaw connects to CRM, ERP, internal systems, and communication channels, handling errors, retries, and maintaining stable operational state at the infrastructure layer for agents built with LangChain or similar tools.
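To make the split concrete, here is a minimal plain-Python sketch. The `Decision` contract, `plan`, and `execute_with_retries` are hypothetical names, not part of either project's API: `plan` stands in for the LangChain side (a real system would let an LLM choose the action), and `execute_with_retries` stands in for the OpenClaw side, which runs the chosen tool and retries transient failures.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Optional


@dataclass
class Decision:
    """Hypothetical contract passed from the intelligence layer to the execution layer."""
    action: str
    args: Dict[str, Any] = field(default_factory=dict)


def plan(request: str) -> Decision:
    """Intelligence-layer stand-in: a real system would let an LLM choose the action."""
    if "lead" in request.lower():
        return Decision("update_crm_fields", {"status": "new_lead"})
    return Decision("reply", {"text": "No action needed."})


def execute_with_retries(
    decision: Decision,
    tools: Dict[str, Callable[..., Any]],
    max_attempts: int = 3,
) -> Any:
    """Execution-layer stand-in: run the chosen tool, retrying connection errors."""
    tool = tools[decision.action]
    last_error: Optional[Exception] = None
    for _ in range(max_attempts):
        try:
            return tool(**decision.args)
        except ConnectionError as err:  # treated as transient, so try again
            last_error = err
    raise RuntimeError(
        f"{decision.action} failed after {max_attempts} attempts"
    ) from last_error


# Usage: a CRM call that times out once, then succeeds.
calls = {"n": 0}


def flaky_crm_update(status: str) -> str:
    calls["n"] += 1
    if calls["n"] < 2:
        raise ConnectionError("CRM timeout")
    return f"CRM updated: {status}"


tools = {"update_crm_fields": flaky_crm_update, "reply": lambda text: text}
print(execute_with_retries(plan("New lead from email"), tools))  # CRM updated: new_lead
```

Keeping the contract to a plain action name plus arguments is what allows each layer to be built and scaled with a different tool; a production runtime would also add backoff, timeouts, and logging.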

| Criteria | LangChain | OpenClaw |
|---|---|---|
| Main Purpose | Develop smart AI applications | Automate business processes |
| Focus | Reasoning capabilities | Execution capabilities |
| Approach | Code-first (requires programming) | Config-first (configuration) |
| Stability | Depends on code logic flow | High (always-on/heartbeat mechanism) |

The intelligence layer with LangChain and the execution layer with OpenClaw

LangChain: Developer Framework for LLM Applications

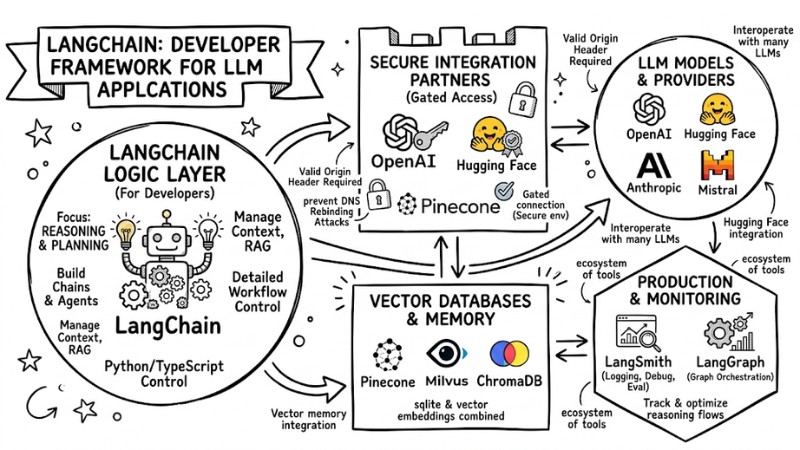

LangChain is a programming framework for building LLM-based applications and agents, providing building blocks like chains, tools, memory, and RAG integration to create complex workflows with detailed control at the Python or TypeScript code level. LangChain stands out for its ecosystem of tools supporting both development and operation of LLM applications in production environments.

- Flexible Pipeline Design: Supports many pipeline types, from RAG and single tools to multi-agent workflows, and integrates with vector databases such as Pinecone, Milvus, and Chroma as well as many LLM providers.

- Monitoring and Orchestration Tools: Provides LangSmith for tracing, debugging, and quality evaluation, and LangGraph for graph-based orchestration of stateful, multi-step agent flows, so reasoning flows can be tracked and optimized in production.
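The core idea behind LangChain's chains is composing small steps (retriever, prompt, model) into one pipeline. The plain-Python sketch below mimics that composition without depending on LangChain itself: `compose`, `retrieve`, `build_prompt`, and `fake_llm` are hypothetical stand-ins, and a keyword-overlap retriever replaces a real vector database such as Chroma. In LangChain you would typically express the same flow with its runnable interface and a real model client.

```python
from functools import reduce
from typing import Any, Callable, Dict, List

Step = Callable[[Any], Any]

DOCS: List[str] = [
    "Refunds are processed within 5 business days.",
    "Support is available on Slack and email.",
    "Enterprise plans include SSO and audit logs.",
]


def compose(*steps: Step) -> Step:
    """Chain steps left to right, so each step's output feeds the next."""
    return lambda value: reduce(lambda acc, step: step(acc), steps, value)


def retrieve(question: str, k: int = 1) -> Dict[str, Any]:
    """Toy retriever: rank documents by word overlap instead of vector similarity."""
    words = set(question.lower().split())
    ranked = sorted(DOCS, key=lambda d: len(words & set(d.lower().split())), reverse=True)
    return {"question": question, "context": ranked[:k]}


def build_prompt(inputs: Dict[str, Any]) -> str:
    """Fill a RAG-style prompt template with the retrieved context."""
    context = "\n".join(inputs["context"])
    return f"Answer using only this context:\n{context}\n\nQuestion: {inputs['question']}"


def fake_llm(prompt: str) -> str:
    """Stand-in for a model call: returns the first context line as the 'answer'."""
    return prompt.splitlines()[1]


rag_chain = compose(retrieve, build_prompt, fake_llm)
print(rag_chain("How long do refunds take?"))  # Refunds are processed within 5 business days.
```

Swapping `fake_llm` for a real model client, or `retrieve` for a vector-store query, leaves the shape of the pipeline unchanged, which is the property that makes chain-style composition easy to extend.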

LangChain is a programming framework for building LLM-based applications and agents

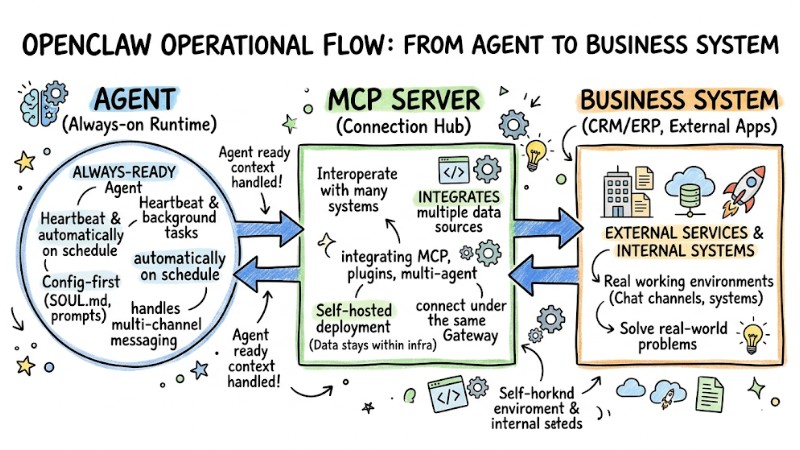

OpenClaw: "Always-on" Agent Runtime & Workflow Layer

OpenClaw is an agent runtime platform designed to run continuously in the background, keeping agents ready and directly connected to real working environments like chat channels, internal systems, and external services. This tool is geared toward operations teams, businesses, and non-programmers, allowing agent behavior to be configured primarily via configuration files, prompts, and files like SOUL.md rather than writing extensive source code.

- Heartbeat and Always-on: Supports heartbeats and background tasks so agents run automatically on a schedule and handle multi-channel messaging without being started manually each time.

- Config-first: Controls agent behavior via configuration files, prompts, and SOUL.md, shortening the time from design to operation in real-world environments.

- Self-hosted and Extensible Integration: Supports self-hosted deployment so data stays within your infrastructure, integrating MCP, plugins, and multi-agent capabilities, allowing multiple data sources and systems to connect under the same Gateway.
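The heartbeat idea can be illustrated with a small scheduler loop. This is a hypothetical sketch, not OpenClaw's implementation: each `HeartbeatJob` has an interval, and on every tick the loop runs whichever jobs are due. The current time is passed in explicitly so the behavior can be checked without waiting; a real always-on runtime would sleep between ticks and run indefinitely.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class HeartbeatJob:
    """Hypothetical background job that should run every `interval` seconds."""
    name: str
    interval: float
    action: Callable[[], None]
    next_run: float = 0.0


def tick(jobs: List[HeartbeatJob], now: float) -> List[str]:
    """Run every job whose next_run time has arrived; return the names that ran."""
    ran = []
    for job in jobs:
        if now >= job.next_run:
            job.action()
            job.next_run = now + job.interval
            ran.append(job.name)
    return ran


log: List[str] = []
jobs = [
    HeartbeatJob("check_inbox", interval=60, action=lambda: log.append("inbox")),
    HeartbeatJob("daily_report", interval=86_400, action=lambda: log.append("report")),
]

# Simulate three ticks one minute apart instead of sleeping in a real loop.
for now in (0, 60, 120):
    print(now, tick(jobs, now))
```

On the first tick both jobs run; afterwards only the one-minute inbox check fires, while the daily report waits for its next slot.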

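Example Configuration (YAML):

```yaml
agent_name: "CRM_Updater"                # name used to identify this agent
tasks:                                   # list of trigger/action rules
  - trigger: "new_lead_email"            # event that starts the task
    action: "update_crm_fields"          # operation the agent performs
    mcp_server: "salesforce_connector"   # MCP server that provides the CRM tool
```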
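A config-first runtime essentially reads rules like the YAML example above and maps incoming events to actions. The sketch below mirrors that example as a Python dict so it runs without a YAML parser. The `dispatch` function and the `update_crm_fields` handler are hypothetical; a real deployment would call a tool on the configured MCP server instead of a local function.

```python
from typing import Any, Callable, Dict, List

# Same rule as the YAML example, expressed as a dict (hypothetical schema).
CONFIG: Dict[str, Any] = {
    "agent_name": "CRM_Updater",
    "tasks": [
        {
            "trigger": "new_lead_email",
            "action": "update_crm_fields",
            "mcp_server": "salesforce_connector",
        }
    ],
}


def update_crm_fields(event: Dict[str, Any], server: str) -> str:
    """Hypothetical handler; a real runtime would call a tool on `server` via MCP."""
    return f"{server}: set lead email to {event['from']}"


# Maps action names from the config to the code that performs them.
ACTIONS: Dict[str, Callable[[Dict[str, Any], str], str]] = {
    "update_crm_fields": update_crm_fields,
}


def dispatch(config: Dict[str, Any], event_type: str, event: Dict[str, Any]) -> List[str]:
    """Run every configured task whose trigger matches the incoming event type."""
    results = []
    for task in config["tasks"]:
        if task["trigger"] == event_type:
            handler = ACTIONS[task["action"]]
            results.append(handler(event, task["mcp_server"]))
    return results


print(dispatch(CONFIG, "new_lead_email", {"from": "jane@example.com"}))
```

The point of the config-first design is that adding a new rule means editing `CONFIG` (or the YAML it mirrors), not the dispatcher.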

OpenClaw is an agent runtime platform designed to run continuously in the background

Detailed Comparison: OpenClaw vs LangChain

The table below summarizes the key differences between OpenClaw and LangChain from the perspective of product, development model, deployment, and operation in the enterprise:

| Criteria | LangChain | OpenClaw |
|---|---|---|
| Product Type | Programming framework to build LLM applications and agents within backend source code or services. | Agent platform or runtime to run agents, workflows, and routing in a dedicated Gateway or agent server. |
| Approach | Code-first, prioritizes writing Python or TypeScript using SDKs, graphs, and primitives like models, prompts, retrievers, tools. | Config-first, prioritizes agent configuration via config files, prompts, tasks, and workflow runtime instead of building all logic at the application code level. |
| Target Audience | Developer teams, SaaS builders, and development groups needing deep AI logic customization within a product. | Operations teams, IT, DevOps, and enterprises needing to deploy always-running, multi-channel agents that are easy to manage in real-world environments. |
| Intended Use | Build LLM applications, RAG, multi-agent workflows, and reasoning logic within web apps, microservices, or backend logic. | Operate multi-channel AI workers, message routing, scheduling, task orchestration, and direct connection to chat channels, MCP, and internal systems. |
| Setup Time | Usually requires hours to days to build logic, integration, logging, and deployment depending on complexity. | Can be initialized faster thanks to the runtime model and pre-configurations, suitable for scenarios needing short time-to-value for operational agents. |
| Multi-channel Capability | Not available as a built-in; teams must manually integrate each channel like Slack, Discord, or Telegram into the application. | Strong support at the Gateway layer with multi-channel connectivity and agent routing by channel, account, or peer. |
| Self-host, Privacy, Audit | Can be self-hosted, but privacy, audit, and observability depend on how the team designs their own infrastructure and monitoring stack. | Supports self-hosting, keeps data within enterprise infrastructure, and provides a runtime layer better suited for business logging, channel logs, and audit trails by agent or message. |
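Gateway-level routing by channel, account, or peer can be pictured as "the most specific rule wins". The sketch below assumes a hypothetical `Binding` format and a precedence of peer, then account, then channel, then a default agent. OpenClaw's actual routing configuration and precedence rules may differ, so treat this as an illustration of the idea rather than of its behavior.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Binding:
    """Hypothetical routing rule: unset fields act as wildcards."""
    agent: str
    channel: Optional[str] = None
    account: Optional[str] = None
    peer: Optional[str] = None

    def specificity(self) -> int:
        # Peer matches outrank account matches, which outrank channel-only matches.
        return (4 if self.peer else 0) + (2 if self.account else 0) + (1 if self.channel else 0)

    def matches(self, channel: str, account: str, peer: str) -> bool:
        return (
            self.channel in (None, channel)
            and self.account in (None, account)
            and self.peer in (None, peer)
        )


def route(bindings: List[Binding], channel: str, account: str, peer: str, default: str) -> str:
    """Return the agent of the most specific matching binding, or the default agent."""
    candidates = [b for b in bindings if b.matches(channel, account, peer)]
    if not candidates:
        return default
    return max(candidates, key=Binding.specificity).agent


BINDINGS = [
    Binding(agent="support_agent", channel="slack"),
    Binding(agent="sales_agent", channel="slack", account="sales-workspace"),
    Binding(agent="vip_agent", channel="whatsapp", peer="+15550100"),
]

print(route(BINDINGS, "slack", "sales-workspace", "U123", default="general_agent"))  # sales_agent
print(route(BINDINGS, "discord", "main", "U999", default="general_agent"))  # general_agent
```

Weighting peer above account above channel means a rule written for one specific contact always overrides a workspace-wide or channel-wide rule.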

Suitable Use Cases for Each Platform

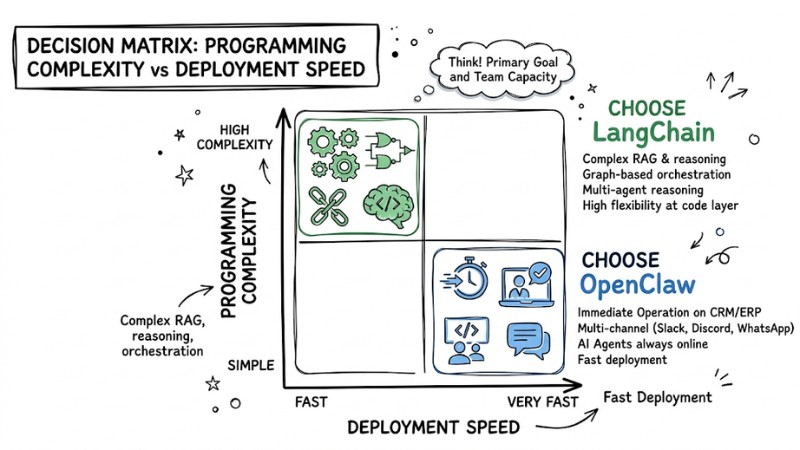

The choice between LangChain and OpenClaw depends on the project's primary goal and the technical capacity of the team.

Choose LangChain when:

- Building a SaaS or a new AI application from scratch, needing to embed LLM logic directly into the backend or an existing service.

- Requiring complex RAG, multi-step reasoning, graph-based orchestration, or multi-agent reasoning at the code layer.

- The team has experienced Python or TypeScript programmers who want detailed control over logic, integration, and infrastructure architecture.

Choose OpenClaw when:

- Needing AI Agents to act on systems like CRM, ERP, ticketing, or internal processes right away, without building an entirely new application.

- Prioritizing fast deployment and multi-channel coverage (Slack, Discord, WhatsApp), with agents always online and less reliance on developers to maintain the runtime.

- The team wants to manage agents via chat channels and configuration instead of investing significant resources in developing and operating separate agent infrastructure.

Cases to use both:

- Use LangChain or LangGraph to build the “logic service” handling reasoning, RAG, and decision-making at the application layer.

- Package that logic as a tool or MCP server and let OpenClaw act as the execution layer and chat entry point, responsible for routing, scheduling, and connecting with enterprise systems.
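As a sketch of the packaging step, the handler below exposes one hypothetical logic-service function, `qualify_lead`, in the shape of MCP's `tools/list` and `tools/call` JSON-RPC 2.0 methods, with the tool's input described by a JSON Schema. It is hand-rolled for illustration only: it skips the MCP initialization handshake and transport, does not validate arguments against the schema, and in practice you would build the server with an official MCP SDK.

```python
import json
from typing import Any, Callable, Dict


def qualify_lead(company_size: int, budget: float) -> str:
    """Hypothetical logic-service function; in practice a LangChain/LangGraph flow."""
    return "qualified" if company_size >= 50 and budget >= 10_000 else "nurture"


# Tool descriptions an MCP client would discover through tools/list.
TOOLS: Dict[str, Dict[str, Any]] = {
    "qualify_lead": {
        "description": "Score an inbound lead using the reasoning service.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "company_size": {"type": "integer"},
                "budget": {"type": "number"},
            },
            "required": ["company_size", "budget"],
        },
    }
}
HANDLERS: Dict[str, Callable[..., str]] = {"qualify_lead": qualify_lead}


def handle(raw_request: str) -> str:
    """Answer a JSON-RPC 2.0 request for the tools/list or tools/call method."""
    request = json.loads(raw_request)
    method, req_id = request.get("method"), request.get("id")
    if method == "tools/list":
        result = {"tools": [{"name": name, **spec} for name, spec in TOOLS.items()]}
    elif method == "tools/call":
        params = request["params"]
        text = HANDLERS[params["name"]](**params["arguments"])
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        error = {"code": -32601, "message": f"Method not found: {method}"}
        return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


call = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "qualify_lead", "arguments": {"company_size": 120, "budget": 25000}},
}
print(handle(json.dumps(call)))
```

The execution layer only needs the tool name and argument schema; how `qualify_lead` reasons internally stays inside the logic service.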

Suitable use cases for each platform

Important Factors to Consider

When deciding to use OpenClaw, LangChain, or a combination of both, enterprises should simultaneously consider governance, scalability, and total cost of ownership:

- AI Governance: Both approaches need a clear governance framework for agent actions, covering permissions, approval and review processes, and audit logs, to avoid incidents in business systems.

- Scalability: LangChain is suitable for scaling AI logic and features at the application layer, while OpenClaw is suitable for scaling the number of operational tasks, channels, and background agents within the same infrastructure.

- TCO (Total Cost of Ownership): With LangChain, costs typically center on technical staff and on building and maintaining the infrastructure, logging, and observability that complex workflows need. With OpenClaw, the config-first model can reduce development time and token overhead, but in return you must run and pay for a separate server or agent runtime.
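One common way to put the governance point into practice is a gate in front of every agent action: check the agent's permissions, hold sensitive actions for human approval, and write each decision to an audit log. The policy tables and the `authorize` function below are hypothetical and independent of either platform; a real system would persist the log and tie approvals to an identity provider.

```python
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical policy: which actions each agent may perform, and which need sign-off.
PERMISSIONS: Dict[str, Set[str]] = {
    "crm_updater": {"read_crm", "update_crm_fields"},
}
NEEDS_APPROVAL: Set[str] = {"update_crm_fields"}
AUDIT_LOG: List[Dict[str, str]] = []


def authorize(agent: str, action: str, approved_by: str = "") -> str:
    """Return 'allowed', 'pending_approval', or 'denied' and record the decision."""
    if action not in PERMISSIONS.get(agent, set()):
        decision = "denied"
    elif action in NEEDS_APPROVAL and not approved_by:
        decision = "pending_approval"
    else:
        decision = "allowed"
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "approved_by": approved_by,
        "decision": decision,
    })
    return decision


print(authorize("crm_updater", "read_crm"))                    # allowed
print(authorize("crm_updater", "update_crm_fields"))           # pending_approval
print(authorize("crm_updater", "update_crm_fields", "j.doe"))  # allowed
print(authorize("crm_updater", "delete_account"))              # denied
print(len(AUDIT_LOG))                                          # 4
```

Logging denied and pending decisions, not just successful ones, is what makes the trail useful when reviewing an incident.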

FAQ

Can LangChain replace OpenClaw?

No. LangChain focuses on building logic and workflows at the application layer, whereas OpenClaw provides an always-on runtime, multi-channel support, logging, and the operational mechanisms needed to keep agents running in enterprise environments.

What is MCP (Model Context Protocol)?

MCP is an open standard protocol that lets AI Agents connect to external data and tools through a common interface: servers describe the tools they expose, including JSON Schema definitions of their inputs. This reduces the need to write a separate wrapper for each system and makes access easier to control.

Can I use both OpenClaw and LangChain at the same time?

Yes. A common pattern is to use LangChain for reasoning, RAG, and decisions at the logic layer, then expose that logic as a service or MCP server that OpenClaw calls to execute specific actions on CRM, ERP, or internal systems, with full logging and orchestration.


OpenClaw vs LangChain is not a mutually exclusive choice but a matter of layering. LangChain is suited to reasoning and workflows at the framework layer, while OpenClaw is suited to serving as an always-on runtime, multi-channel routing hub, and integrator with enterprise systems. When evaluated on governance, scalability, and TCO, many businesses are likely to get the best results by using LangChain to build the logic “brain” and OpenClaw to operate AI workers in real-world environments in a stable, controlled, and easy-to-monitor way.