What is AIaaS? A detailed guide to Artificial Intelligence as a Service

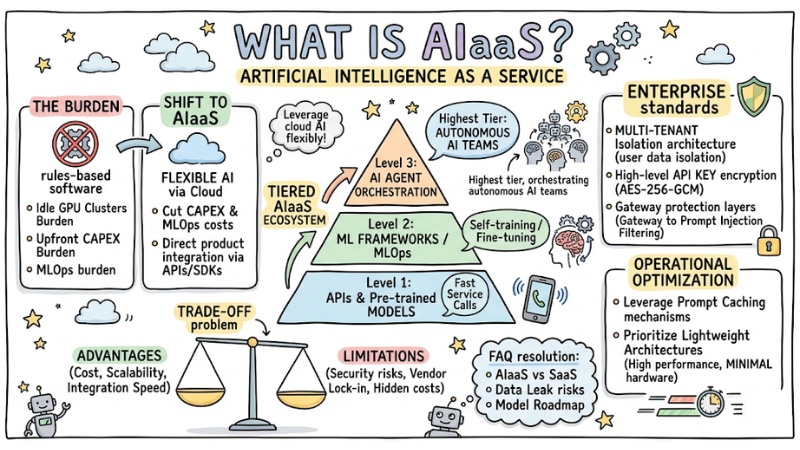

As the cost of maintaining idle GPU clusters and running MLOps becomes a growing burden, businesses are shifting to flexible AI infrastructure models that require no upfront CAPEX investment. AIaaS emerges as a practical choice: it provides AI capabilities via the cloud and allows direct product integration through APIs, while addressing the “build vs. buy” dilemma and the security risks of putting LLMs into production. In this article, I will walk through the core benefits and most common classifications of AIaaS.

Key Points

- The nature of AIaaS: Understand that AIaaS is the distribution of AI inference capabilities via APIs/SDKs instead of traditional rules-based software, helping businesses cut infrastructure (CAPEX) and MLOps costs.

- Tiered ecosystem: Differentiate 3 levels: APIs/Pre-trained Models (fast service calls), ML Frameworks/MLOps (self-training/fine-tuning), and AI Agent Orchestration (the highest tier: orchestrating autonomous AI teams).

- The trade-off problem: Recognize the advantages in cost, scalability, and integration speed; alongside limitations regarding security risks, vendor lock-in, and hidden costs.

- Enterprise standards: An enterprise-grade AIaaS system must feature Multi-tenant isolation architecture (user data isolation), high-level API Key encryption (AES-256-GCM), and protection layers from the Gateway to Prompt Injection filtering.

- Operational optimization: Leverage Prompt Caching mechanisms and prioritize lightweight architectures to ensure high performance with minimal hardware resources.

- FAQ resolution: Understand the differences between AIaaS and traditional SaaS, how to manage data leak risks through multi-tenant architecture, and the roadmap for choosing AI models to optimize costs.

What is AIaaS?

AIaaS (Artificial Intelligence as a Service) is a model for distributing artificial intelligence services via cloud platforms. It allows developers to integrate inference capabilities directly into applications via API or SDK without having to build internal server infrastructure themselves.

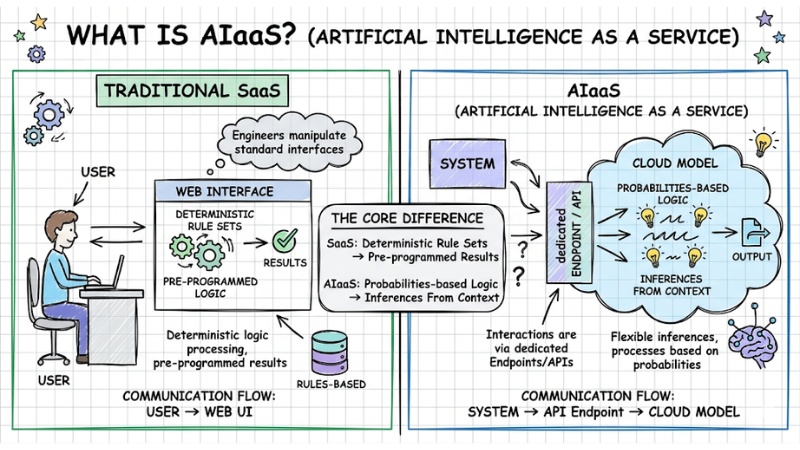

The core difference between traditional SaaS and AIaaS lies in the nature of logic processing. While traditional software operates on deterministic rule sets, AI services produce outputs based on probabilities.

In Cloud Computing infrastructure, an AIaaS system doesn't return pre-programmed results but infers them flexibly from the context of the input data. Therefore, engineers interact with AIaaS systems primarily through dedicated Endpoints and APIs, rather than through standard Web UI interfaces.
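The contrast between deterministic rules and probabilistic inference can be sketched in a few lines. This is only an illustration: the discount rule, the labels, and the scores below are made-up values, not output from any real model or service.

```python
import math
import random


def rule_based_discount(order_total: float) -> float:
    """Deterministic rule: the same input always yields the same output."""
    return 0.10 if order_total >= 100 else 0.0


def softmax(scores: list[float], temperature: float = 1.0) -> list[float]:
    """Turn raw scores into a probability distribution."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_label(labels: list[str], scores: list[float],
                 rng: random.Random, temperature: float = 1.0) -> str:
    """Probabilistic step: draw one label according to its probability."""
    probs = softmax(scores, temperature)
    return rng.choices(labels, weights=probs, k=1)[0]


labels = ["refund", "exchange", "escalate"]  # hypothetical intents
scores = [2.0, 1.5, 0.3]                     # made-up model scores

print(rule_based_discount(150))              # always the same answer
rng = random.Random(42)
print([sample_label(labels, scores, rng) for _ in range(5)])  # may vary per draw
```

Lowering `temperature` concentrates probability on the top-scoring label, which is one common way to make a probabilistic service behave more predictably.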

Quick comparison table: AIaaS vs SaaS

| Criteria | SaaS (Software as a Service) | AIaaS (AI as a Service) |
|---|---|---|
| Interaction target | End-users. | Software Engineers, Data Scientists. |
| Output architecture | Deterministic. | Non-deterministic/Probabilistic. |
| Communication protocol | Web UI, Mobile App. | REST API, gRPC, SDKs. |
| Infrastructure management | Provider handles everything. | Based on specialized infrastructure (IaaS). |

Classifying the modern AIaaS ecosystem

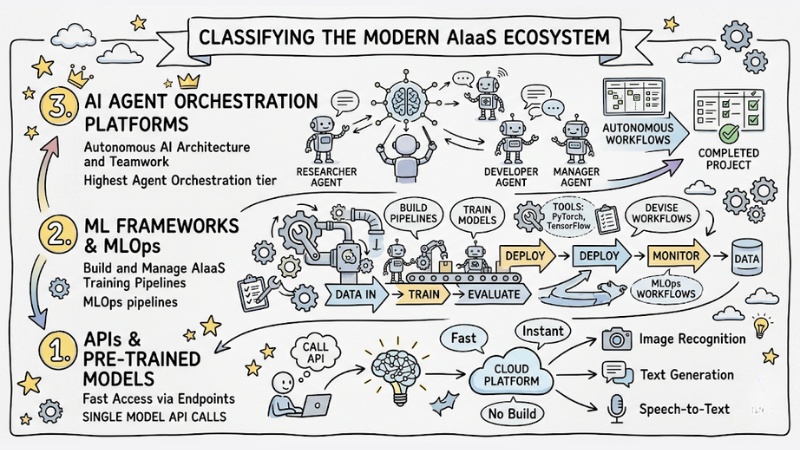

The enterprise AIaaS ecosystem is divided into 3 main tiers:

- Tier 1: APIs and Pre-trained Models (Fast access via Endpoints).

- Tier 2: Machine Learning Frameworks & MLOps (Build and manage AIaaS training pipelines).

- Tier 3: AI Agent Orchestration Platforms (Autonomous AI architecture and teamwork).

Tier 1: APIs and Pre-trained Models

This is the layer consumed directly through API integration. Developers simply send a request containing data and receive results from pre-trained models.

This AIaaS layer is ideal for image recognition features, natural language processing (NLP), or building a basic Generative AI application. It doesn't require deep Data Science knowledge, which makes it well suited to Web Developers who want to add AI features quickly.
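A Tier 1 integration usually comes down to one authenticated HTTP request. The sketch below only builds the request and parses a sample response. The endpoint URL, the `prompt` and `max_tokens` fields, and the `text` response field are assumptions for illustration; a real provider's API reference defines the actual names.

```python
import json
import urllib.request

API_URL = "https://api.example.com/v1/generate"  # hypothetical endpoint


def build_request(prompt: str, api_key: str,
                  max_tokens: int = 256) -> urllib.request.Request:
    """Package the input data as a JSON POST request with a bearer token."""
    payload = {"prompt": prompt, "max_tokens": max_tokens}  # assumed field names
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def parse_response(body: bytes) -> str:
    """Extract the generated text; the "text" field is an assumption."""
    return json.loads(body)["text"]


req = build_request("Summarize this support ticket.", api_key="sk-demo")
# Sending it would look like:
#   body = urllib.request.urlopen(req, timeout=30).read()
print(req.get_method(), req.full_url)
print(parse_response(b'{"text": "Customer asks for a refund."}'))
```

Keeping request construction separate from sending makes the integration easy to unit test without network access.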

Tier 2: Machine Learning Frameworks and MLOps

This tier provides a computing environment for businesses to self-train or fine-tune their own models. AIaaS Machine Learning frameworks provided as Cloud services help engineers control the entire model lifecycle.

While it offers high customizability, this tier requires teams to set up complex data pipelines, from cleaning raw data to automated data labeling, before feeding the results into the training flow.

Tier 3: AI Agent Orchestration Platforms

This is the most advanced form of AIaaS for Enterprise AI, shifting from calling single chatbot APIs to managing teams of autonomously operating AIs. This tier allows Agents to be configured to work in teams, automatically delegating tasks, querying Vector databases, and calling external tools. Complex workloads are broken down and delegated among coordinating agents, so the system can resolve entire business processes instead of just answering questions.
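The delegation idea can be sketched with a tiny orchestrator that routes each step of a plan to the agent registered for that skill. Everything here is hypothetical: the `Agent` and `Orchestrator` classes, the skill names, and the lambda handlers, which stand in for real LLM calls, Vector database queries, or tool invocations.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    name: str
    skill: str
    handler: Callable[[str], str]  # stand-in for an LLM, database, or tool call


@dataclass
class Orchestrator:
    agents: dict[str, Agent] = field(default_factory=dict)

    def register(self, agent: Agent) -> None:
        self.agents[agent.skill] = agent

    def run(self, plan: list[tuple[str, str]]) -> list[str]:
        """Run each (skill, task) step in order, passing the previous result on."""
        results: list[str] = []
        context = ""
        for skill, task in plan:
            agent = self.agents.get(skill)
            if agent is None:
                raise LookupError(f"no agent registered for skill {skill!r}")
            prompt = f"{task} | previous: {context}" if context else task
            context = agent.handler(prompt)
            results.append(f"{agent.name}: {context}")
        return results


orchestrator = Orchestrator()
orchestrator.register(Agent("Researcher", "retrieve", lambda t: f"docs for [{t}]"))
orchestrator.register(Agent("Writer", "draft", lambda t: f"draft from [{t}]"))

results = orchestrator.run([
    ("retrieve", "refund policy"),
    ("draft", "reply to customer"),
])
for line in results:
    print(line)
```

This sketch runs the steps one after another for clarity. A production platform would typically add planning, retries, and parallel execution on top of the same routing idea.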

Advantages and limitations of AIaaS

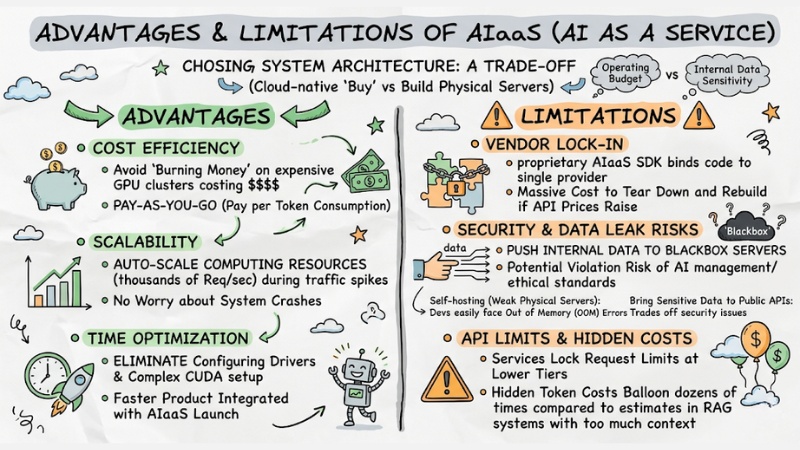

Choosing a system architecture is always a trade-off. Specifically, the choice between Cloud-native delivery (Buy) and setting up physical servers yourself (Build) depends directly on the operating budget and the sensitivity of internal data.

Advantages

- Cost efficiency: Businesses avoid "burning money" on GPU clusters costing tens of thousands of dollars. You only pay according to the actual token consumption (Pay-as-you-go).

- Scalability: Automatically scale computing resources up to thousands of requests/second during traffic spikes without worrying about system crashes.

- Time optimization: Completely eliminates the step of configuring NVIDIA drivers or complex CUDA setups, helping products integrated with AIaaS features launch faster.

Limitations

However, relying on third-party platforms brings critical weaknesses that System Architects need to understand:

- Vendor lock-in: Using proprietary AIaaS SDK functions tightly binds the source code to a single provider. If they raise API prices, the cost to tear down and rebuild will be massive.

- Security and data leak risks: Sending internal data to black-box servers risks violating current AI governance and ethics standards. The trade-off runs both ways: self-hosting on underpowered physical servers often leads to Out of Memory (OOM) errors, while sending sensitive data to public APIs swaps that problem for security exposure.

- API limits and hidden costs: Most services cap request limits at lower tiers. Furthermore, when a RAG system built on AIaaS crams too much context into each prompt, hidden token costs can balloon to dozens of times the original estimate.
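One common way to soften vendor lock-in is to keep business logic behind a small interface and wrap each provider in an adapter. The sketch below assumes two made-up providers. `VendorAClient` and `VendorBClient` are placeholders, and a real adapter would call the provider's SDK inside `complete`.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface the application code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class VendorAClient:
    """Placeholder adapter; a real one would call provider A's SDK here."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class VendorBClient:
    """Placeholder adapter for a second, interchangeable provider."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"


PROVIDERS: dict[str, type] = {"a": VendorAClient, "b": VendorBClient}


def get_model(name: str) -> TextModel:
    """Pick the provider from configuration instead of hard-coding it."""
    return PROVIDERS[name]()


def summarize(model: TextModel, text: str) -> str:
    """Business logic: knows nothing about which vendor is behind the model."""
    return model.complete(f"Summarize: {text}")


print(summarize(get_model("a"), "quarterly report"))
print(summarize(get_model("b"), "quarterly report"))
```

With this layout, a price increase from one provider means writing one new adapter rather than rebuilding every call site.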

Standards for choosing an enterprise AIaaS platform

To resolve the security and performance dilemma, an Enterprise-grade AIaaS platform needs to meet strict architectural criteria. Taking the open-source platform GoClaw, which is highly rated by the engineering community, as an example, a Reference Architecture for AIaaS must address both speed and data isolation.

In terms of security, the system must apply Multi-tenant isolation architecture. Each workspace needs a completely separate context. API keys must be encrypted with a strong scheme such as AES-256-GCM to prevent theft. Protection needs to be split into 5 layers, from Gateway authentication and anti-Prompt Injection filtering down to restricting access rights per Channel.
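The isolation requirement can be illustrated with a store that makes the tenant ID part of every lookup key. This is a minimal sketch under stated assumptions, not GoClaw's implementation: `WorkspaceStore`, `Workspace`, and `TenantAccessError` are hypothetical names, and API key encryption and Prompt Injection filtering are deliberately left out.

```python
from dataclasses import dataclass, field


class TenantAccessError(PermissionError):
    """Raised when a tenant asks for a workspace it does not own."""


@dataclass
class Workspace:
    tenant_id: str
    history: list[str] = field(default_factory=list)  # per-workspace context


class WorkspaceStore:
    def __init__(self) -> None:
        self._workspaces: dict[tuple[str, str], Workspace] = {}

    def create(self, tenant_id: str, workspace_id: str) -> Workspace:
        workspace = Workspace(tenant_id)
        self._workspaces[(tenant_id, workspace_id)] = workspace
        return workspace

    def get(self, tenant_id: str, workspace_id: str) -> Workspace:
        # The tenant ID is part of the key, so a lookup cannot cross tenants.
        try:
            return self._workspaces[(tenant_id, workspace_id)]
        except KeyError:
            raise TenantAccessError(
                f"workspace {workspace_id!r} not found for tenant {tenant_id!r}"
            ) from None


store = WorkspaceStore()
store.create("acme", "support").history.append("Customer asked about refunds")

print(store.get("acme", "support").history)
try:
    store.get("globex", "support")  # same workspace name, different tenant
except TenantAccessError as err:
    print("blocked:", err)
```

Because two tenants can use the same workspace name without ever seeing each other's context, isolation does not depend on callers remembering to filter results.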

Regarding operational optimization, core components written in systems languages like Go (Golang) produce very lightweight executables. For instance, GoClaw ships as a ~25MB binary, consumes ~35MB of RAM, boots in under 1 second, and can run smoothly on a $5 VPS. Moreover, to minimize hidden costs in AIaaS, the platform needs to support Prompt Caching to cut up to 90% of input token costs when processing long-term context memory.

Frequently asked questions about AIaaS

What is AIaaS (Artificial Intelligence as a Service)?

AIaaS is a model that provides artificial intelligence tools and capabilities through cloud platforms. Users can integrate AI into systems via APIs or SDKs without investing in GPU infrastructure or developing models from scratch.

What is the difference between AIaaS and traditional SaaS?

SaaS typically provides software with deterministic functions (rule-based). Conversely, AIaaS provides probabilistic data processing capabilities like language or computer vision models, allowing flexible integration into custom applications via AIaaS APIs.

What are the main risks when deploying AIaaS for enterprises?

The biggest risks when using AIaaS include:

- Becoming completely dependent on the provider's ecosystem.

- Risks of leaking sensitive data onto the cloud.

- Token fees spiking as usage scales.

Why should businesses prioritize Multi-tenant architecture when using AIaaS?

Multi-tenant architecture in AIaaS allows complete separation of workspaces, contexts, and API keys between users. This ensures data safety, avoids cross-information leaks, and meets strict enterprise security standards.

How can you reduce costs when using AIaaS services?

To reduce costs when using AIaaS services, you should:

- Use Prompt Caching: Leverage prompt caches to cut up to 90% of input token costs.

- Choose appropriate models: Use smaller models for simple tasks.

- Optimize infrastructure: Use lightweight platforms to reduce server operating costs.
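The effect of Prompt Caching on a bill is simple arithmetic. The sketch below uses hypothetical numbers throughout: a price of $3 per million input tokens, 80% of tokens served from cache, and a 90% discount on cached tokens. Real prices and cache hit rates depend on the provider and the workload.

```python
def input_cost(tokens: int, price_per_million: float,
               cached_fraction: float = 0.0,
               cache_discount: float = 0.0) -> float:
    """Estimate input token cost in dollars.

    cached_fraction: share of tokens served from the prompt cache (0..1).
    cache_discount:  price reduction applied to cached tokens (0..1).
    """
    cached = tokens * cached_fraction
    fresh = tokens - cached
    billable = fresh + cached * (1.0 - cache_discount)
    return billable * price_per_million / 1_000_000


MONTHLY_TOKENS = 10_000_000  # hypothetical workload
PRICE = 3.00                 # hypothetical dollars per million input tokens

baseline = input_cost(MONTHLY_TOKENS, PRICE)
with_cache = input_cost(MONTHLY_TOKENS, PRICE,
                        cached_fraction=0.8, cache_discount=0.9)

print(f"without caching: ${baseline:.2f}")
print(f"with caching:    ${with_cache:.2f}")
```

With these assumed numbers the bill drops from $30.00 to about $8.40. The full 90% saving only applies to the cached share of tokens, so the overall reduction depends on how much of each prompt is reused.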

What is AI Agent Orchestration in AIaaS and why is it important?

AI Agent Orchestration in AIaaS is the ability to coordinate multiple AI Agents to work together as a team, allowing for work delegation and coordination to execute complex processes. It helps automate entire workflows instead of just stopping at single chatbot responses.

In the digital transformation era, AIaaS is no longer just a simple API service; it has become an essential piece of infrastructure. The shift towards Agent Orchestration architecture makes software systems smarter, but it also places a major responsibility on businesses for data governance and for managing vendor lock-in risks.