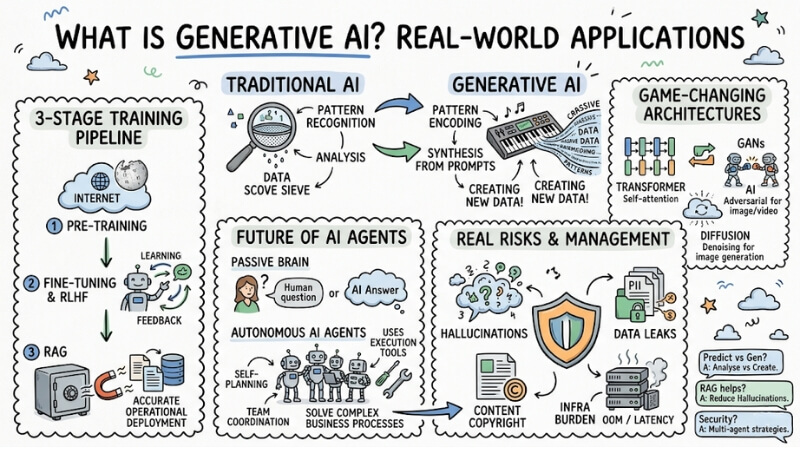

What Is Generative AI? Real-World Applications of Generative AI

Generative AI is a branch of artificial intelligence focused on creating entirely new data based on patterns learned from massive datasets. Instead of just analyzing existing data, Generative AI synthesizes elements to produce original results based on user prompts. In this article, I will break down the underlying workflow, core architectures, and the real risks of this technology when applied to real-world environments.

Key Points

- The nature of Generative AI: Understand the shift from Pattern Recognition (Traditional AI) to Pattern Encoding (Generative AI), which enables computers to synthesize and create original data.

- 3-stage pipeline: Follow the roadmap from Pre-training (building foundational knowledge from the Internet), through Fine-tuning & RLHF (behavioral refinement), to RAG (retrieving real-world data at query time) to deploy AI into enterprise operations accurately.

- Game-changing architectures: Understand the mechanisms of the Transformer (parallel processing via Self-attention), GANs (adversarial networks for image/video generation), and Diffusion Models (denoising for image generation), the foundations powering modern models.

- The future of Agentic AI: See how the role of GenAI is shifting from a "passive brain" (only answering when asked) to autonomous AI Agents (self-planning, team coordination, using execution tools) that solve complex business processes.

- Practical risk management: Face the vulnerabilities head-on: Hallucinations, sensitive data leaks (PII), content copyright, and infrastructure burden (OOM/Latency) when scaling up.

- FAQ resolution: Clarify the difference between predictive AI and generative AI, the importance of RAG in reducing hallucinations, and system security strategies when deploying AI Agents in enterprises.

What is Generative AI? Core differences from traditional AI

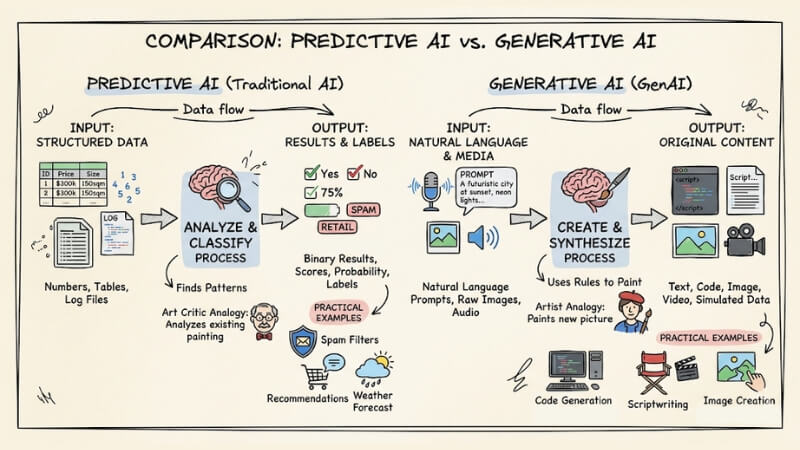

To understand the nature of Generative AI, we need to look back at how AI has operated over the past decade. Traditional Machine Learning models (Predictive AI) were built on the principle of Pattern Recognition. They were trained to find patterns in data and then provide labels or probability predictions, for example predicting house prices or classifying spam emails.

Conversely, Generative AI marks a shift toward Pattern Encoding. The system doesn't just stop at recognition; it uses those rules to create entirely new Synthetic Data. If traditional AI analyzes data like an art critic analyzing a painting, Generative AI creates new content like an artist painting a picture.

Furthermore, while traditional AI typically works on structured input data (tables, numeric features), GenAI is usually operated through a natural language interface, allowing communication in everyday language.

Here is a comparison table between Generative AI and traditional AI to give you an overview:

| Criteria | Traditional AI | Generative AI |
|---|---|---|
| Core purpose | Analyze, classify, and predict probabilities. | Create, synthesize, and simulate data. |
| Input data | Structured data (numbers, tables, log files). | Natural language (Prompts), raw images, audio. |
| Output data | Binary results, scores, or labels. | Original content: Text, Code, Image, Video. |
| Practical example | Spam filters, product recommendations, weather forecasting. | Code Generation (Copilot), scriptwriting, image creation (Midjourney). |

Comparison of Generative AI and traditional AI

Despite its power, GenAI does not replace traditional AI. For problems such as numeric forecasting, credit scoring, or financial fraud detection, Predictive AI remains the better fit because its outputs are consistent, measurable against ground truth, and easier to audit.

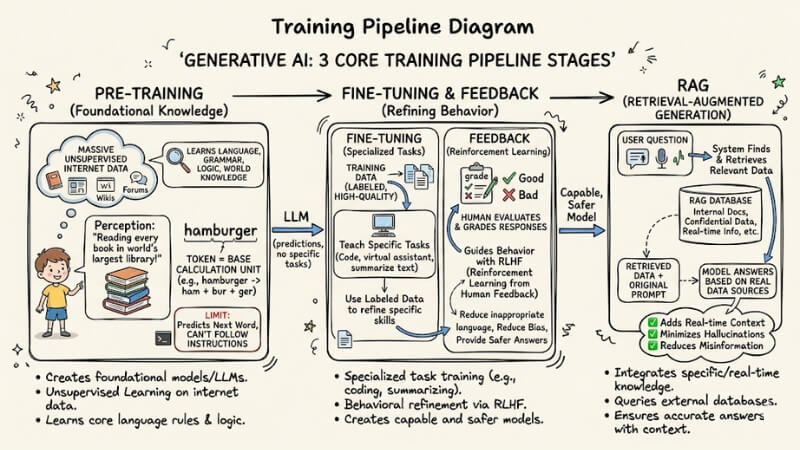

Breaking down how Generative AI works: 3 core stages

How can a computer model write Python code or compose poetry? The secret lies in a massive training pipeline.

Technical term to know: A token is the basic unit of text that a model processes. A long word might be split into several tokens (e.g., "hamburger" = "ham" + "bur" + "ger"). AI models don't read letters the way humans do; they assign a probability to every possible next token and choose from that distribution.
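To make next-token prediction concrete, here is a minimal sketch. The four-token vocabulary and the raw scores (logits) are invented for illustration; a real model has a vocabulary of tens of thousands of tokens and computes the scores with a neural network.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical candidates and scores for the context "ham" + "bur".
vocab = ["ger", "ton", "ned", "y"]
logits = [4.0, 1.0, 0.5, -1.0]

probs = softmax(logits)
# Greedy decoding: pick the single most likely token.
next_token = vocab[probs.index(max(probs))]
print(next_token, round(max(probs), 3))
```

Real systems often sample from the distribution instead of always taking the top token, which is why the same prompt can produce different answers.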

A Generative AI system typically reaches production through 3 main stages:

Pre-training

This is the stage where foundation models, including LLMs, are created. The process applies self-supervised learning (often loosely called unsupervised learning) to a massive amount of data, much of it from the Internet: the model learns by predicting upcoming tokens, so no human labeling is required. Picture a child entering the world's largest library and reading every book.

The model learns how language works, grammar, basic logic, and how the world operates. However, at this stage the model is only trained to predict the next token; it does not yet reliably answer questions or follow instructions.

Fine-tuning and Feedback

The foundational model needs specialized training.

- Fine-tuning: Uses Training Data (labeled, high-quality data) to teach AI how to perform specific tasks: how to code, how to act as a virtual assistant, or how to summarize text.

- RLHF mechanism (Reinforcement Learning from Human Feedback): Humans evaluate and grade AI's responses to guide behavior, helping the model reduce inappropriate language, avoid bias, and provide safer answers.

RAG (Retrieval-Augmented Generation)

A typical LLM only knows what it learned at the time of training, so it cannot automatically access confidential data, internal documents, or new information generated within your business.

RAG has become a widely used technique for closing this gap. When you ask a question, the system searches a knowledge source, retrieves the most relevant passages, and feeds them to the model alongside your prompt so that the answer is grounded in actual data sources. This gives the model up-to-date context and reduces, though does not eliminate, AI hallucinations and misinformation.
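The retrieve-then-prompt flow can be sketched in a few lines. This is a toy version under stated assumptions: the documents are made up, relevance is a simple word-overlap count (production systems usually use vector embeddings), and the final call to a model is left out.

```python
import re
from collections import Counter

# Hypothetical internal documents the base model never saw in training.
DOCS = [
    "Refunds are processed within 14 days of the return request.",
    "The VPN must be enabled before accessing the staging cluster.",
    "Annual leave requests need manager approval two weeks ahead.",
]

def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())

def score(query, doc):
    """Toy relevance score: number of words shared by query and document."""
    q, d = Counter(tokenize(query)), Counter(tokenize(doc))
    return sum((q & d).values())

def retrieve(query, docs, k=1):
    """Return the k documents with the highest overlap score."""
    return sorted(docs, key=lambda doc: score(query, doc), reverse=True)[:k]

def build_prompt(query, docs):
    """Place the retrieved passages in front of the user's question."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )

prompt = build_prompt("How long do refunds take?", DOCS)
print(prompt)
```

The resulting prompt, not the bare question, is what gets sent to the model, which is how the answer ends up tied to the retrieved source.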

3 "Game-changing" model architectures of GenAI

To create powerful Deep Learning Models, hardware (GPUs) is not enough. Breakthroughs in new architectural algorithms are what opened this era.

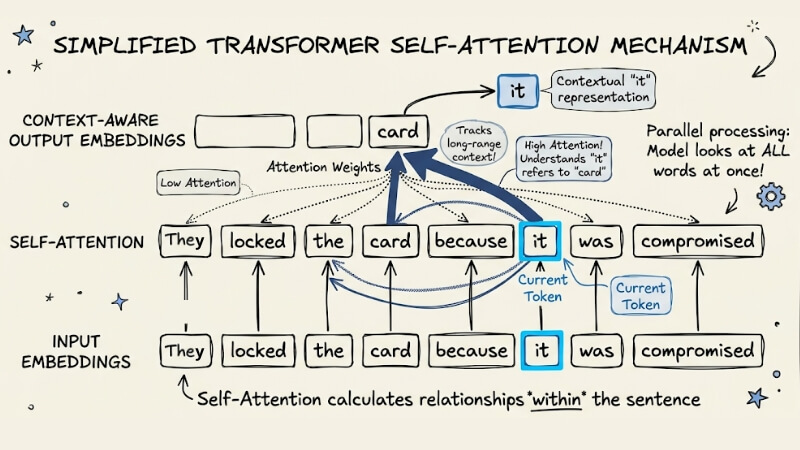

Transformer Architecture

Introduced by Google researchers in 2017 in the paper "Attention Is All You Need," the Transformer has become the foundation for most current large language models (such as GPT, Claude, and Llama).

Instead of processing text sequentially word by word, the Transformer architecture allows models to process the entire input sequence in parallel, with a focus on the Self-attention mechanism, helping the model understand the relationship between words in a sentence more effectively.

Imagine a long sentence: "The bank locked the card because it was compromised." The Self-attention mechanism helps the system understand that "it" refers to "card" rather than "bank" by assigning attention weights between words. Because any two positions in a sequence can be connected directly, Transformers overcame the long-range dependency limits of earlier recurrent networks, which processed text step by step and were prone to vanishing gradients.
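The weighting idea can be illustrated with scaled dot-product attention written in plain Python. The 2-dimensional token vectors below are hand-picked so that "it" resembles "card"; real models learn high-dimensional vectors and apply separate learned projections for queries, keys, and values, which this sketch skips.

```python
import math

def softmax(row):
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention computed for every position."""
    d_k = len(keys[0])
    weights = []
    for q in queries:
        # Similarity of this token to every token, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights.append(softmax(scores))
    # Each output is a weighted average of the value vectors.
    outputs = [
        [sum(w * v[j] for w, v in zip(row, values)) for j in range(len(values[0]))]
        for row in weights
    ]
    return outputs, weights

# Hypothetical vectors, chosen by hand for illustration only.
tokens = ["bank", "card", "it"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.1, 0.9]]

# Simplification: the same vectors serve as queries, keys, and values.
outputs, weights = self_attention(vectors, vectors, vectors)
it_weights = dict(zip(tokens, weights[2]))
print(it_weights)
```

No position depends on the result of another, so all rows can be computed in parallel, which is the property that makes the architecture a good match for GPUs.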

Generative Adversarial Networks (GANs)

GANs are excellent architectures for creating synthetic images and videos. They consist of two Neural Networks working adversarially:

- Generator: Attempts to create fake data (e.g., human face images) to look as realistic as possible.

- Discriminator: Tasked with distinguishing what is real data from the training set versus what is fake data created by the Generator.

These two networks constantly "battle" and learn from each other, like a counterfeiter and the police. The Generator gets better at drawing, while the Discriminator gets sharper at spotting fakes. Training ideally converges when the fake images are so realistic that the Discriminator can do no better than guess.
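The tug-of-war shows up directly in the loss values. The sketch below uses the standard binary cross-entropy form of the GAN objective (with the commonly used non-saturating generator loss); the discriminator outputs are made-up numbers, not results from a trained network.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Low when the discriminator scores real data near 1 and fakes near 0."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Low when the discriminator is fooled into scoring fakes near 1."""
    return -math.log(d_fake)

# Early in training (hypothetical): fakes are easy to spot.
early = (discriminator_loss(0.9, 0.1), generator_loss(0.1))

# Near equilibrium (hypothetical): the discriminator can only guess 0.5.
late = (discriminator_loss(0.5, 0.5), generator_loss(0.5))

print("early D/G loss:", [round(x, 3) for x in early])
print("late  D/G loss:", [round(x, 3) for x in late])
```

As the Generator improves, its loss falls while the Discriminator's loss rises toward the "coin flip" value of 2·ln 2.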

Diffusion Models

If you have ever used Stable Diffusion, you have used a Diffusion model, and tools such as Midjourney are widely understood to rely on the same family of techniques. This architecture operates based on two main steps: adding noise and denoising.

The system takes an original image and "destroys" it by continuously adding noise to pixels until only a static, meaningless screen of noise remains. Then, the model learns to reverse the process: Starting from a screen of pure noise, it gradually denoises to create a brand new, complete image, guided by the text command (prompt) you enter.
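The noise-adding half of the process has a simple closed form, sketched below in the style of the DDPM formulation. The four "pixel" values and the linear noise schedule are illustrative assumptions; the denoising half requires a trained neural network and is not shown.

```python
import math
import random

def alpha_bar(betas, t):
    """Fraction of the original signal that survives t noising steps."""
    product = 1.0
    for beta in betas[:t]:
        product *= 1.0 - beta
    return product

def add_noise(pixels, betas, t, rng):
    """Jump straight to step t: x_t = sqrt(a) * x_0 + sqrt(1 - a) * noise."""
    a = alpha_bar(betas, t)
    return [
        math.sqrt(a) * p + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0)
        for p in pixels
    ]

steps = 1000
# Assumed linear schedule: small noise at first, larger noise later.
betas = [1e-4 + (0.02 - 1e-4) * i / (steps - 1) for i in range(steps)]

rng = random.Random(0)
image = [0.8, -0.3, 0.5, 0.1]  # hypothetical pixel values in [-1, 1]

slightly_noisy = add_noise(image, betas, 10, rng)
pure_noise = add_noise(image, betas, steps, rng)

print("signal left after 10 steps:  ", round(alpha_bar(betas, 10), 4))
print("signal left after 1000 steps:", alpha_bar(betas, steps))
```

After a handful of steps the image is almost intact; after the full schedule essentially none of the original signal remains, which is the "screen of pure noise" the model learns to reverse.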

Applications of Generative AI technology

- Multi-modal ecosystem: AI doesn't just process text. New models can recognize audio directly, analyze charts from image files, or generate instructional videos from a text script.

- Accelerating software development cycles: Generate boilerplate code, convert source code between languages (e.g., from Java to Golang), and write automated unit tests.

- Data automation: Extract information from thousands of unstructured PDF invoices and convert it into structured JSON that downstream systems can validate.
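For the data automation case, model output should be checked before it enters another system. The sketch below assumes a hypothetical three-field invoice schema and validates a JSON string as it might come back from a model; the field names are illustrative, not a standard.

```python
import json

# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {
    "invoice_number": str,
    "total": (int, float),
    "currency": str,
}

def parse_invoice(model_output):
    """Parse model-produced JSON and raise ValueError if it breaks the schema."""
    try:
        data = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            raise ValueError(f"missing field: {field}")
        value = data[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            raise ValueError(f"wrong type for field: {field}")
    return data

invoice = parse_invoice(
    '{"invoice_number": "INV-001", "total": 129.5, "currency": "USD"}'
)
print(invoice["invoice_number"], invoice["total"])
```

Rejected outputs can be retried or routed to a person for review instead of silently flowing into accounting data.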

The flip side of Generative AI technology

Generative AI is clearly making its way into enterprise operational flows. However, the benefits come with serious security, legal, and reliability risks.

- AI Hallucinations: This is the most common reliability failure. AI can provide incorrect financial data or reference non-existent code libraries while sounding confident. This is exactly why you need a Human-in-the-loop (human moderation) mechanism when applying it.

- Data leakage: Sending internal source code or customer data (PII) to public APIs exposes it to a third party, and depending on the provider's terms, that data may be retained or used to train the provider's models.

- Copyright: Legal risks arise when AI output reproduces copyrighted code or images from its training data (e.g., the lawsuits involving The New York Times and GitHub Copilot).
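One common mitigation for the data leakage risk is to mask obvious PII before a prompt leaves your network. The sketch below is a minimal regex-based redactor; the two patterns are simplified assumptions that catch typical email addresses and phone numbers, not a complete PII detector.

```python
import re

# Simplified patterns for illustration; real PII detection needs far more coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(text):
    """Replace emails and phone numbers with placeholders before sending a prompt."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)

raw = "Contact jane.doe@example.com or +1 (555) 010-9999 about order 42."
safe = redact(raw)
print(safe)
```

Redaction of this kind reduces exposure but does not replace contractual and access controls on the API itself.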

The inevitable evolution: From Generative AI to Agentic AI

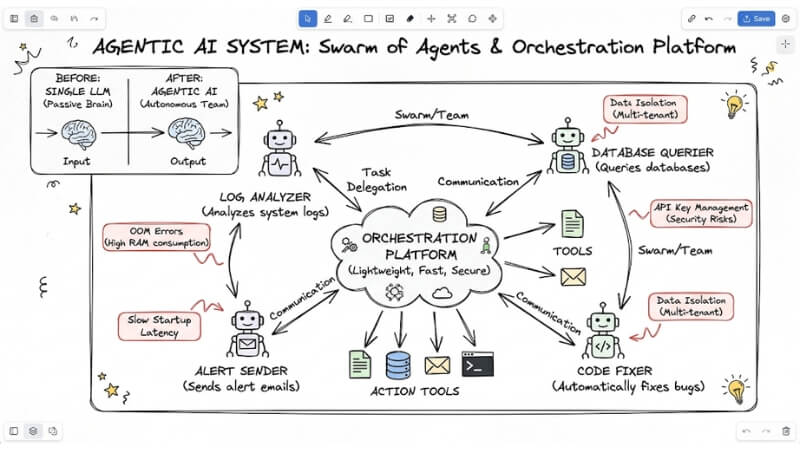

A single large language model (LLM), no matter how smart, only acts as a passive "brain": you ask, it answers. To execute complex business processes, like automatically analyzing system logs, querying databases, sending alert emails, and automatically fixing code bugs, that brain needs action tools. That is the birth of AI Agents.

An Agentic AI system is one in which multiple AI Agents work as a team (sometimes called a swarm), planning, delegating tasks to each other, and using tools without constant human intervention.

However, when DevOps engineers deploy Agents at large scale, practical technical problems quickly appear:
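The difference between a passive model and an agent is the loop around it: decide on an action, call a tool, observe the result, and repeat. The sketch below shows that loop with a single agent. Everything in it is hypothetical: the two tools return canned strings, and `stub_planner` stands in for the LLM call that would normally choose the next action.

```python
def read_logs(_arg):
    """Hypothetical tool: pretend to fetch the latest system log line."""
    return "ERROR: disk usage at 97% on node-3"

def send_alert(message):
    """Hypothetical tool: pretend to notify the on-call engineer."""
    return f"alert sent: {message}"

TOOLS = {"read_logs": read_logs, "send_alert": send_alert}

def stub_planner(history):
    """Stand-in for an LLM: returns (tool_name, argument), or None when done."""
    if not history:
        return ("read_logs", "")
    last_tool, last_result = history[-1]
    if last_tool == "read_logs" and "ERROR" in last_result:
        return ("send_alert", last_result)
    return None

def run_agent(planner, tools, max_steps=5):
    """Plan, act, observe, repeat; max_steps guards against endless loops."""
    history = []
    for _ in range(max_steps):
        action = planner(history)
        if action is None:
            break
        name, arg = action
        if name not in tools:
            raise KeyError(f"unknown tool: {name}")
        history.append((name, tools[name](arg)))
    return history

history = run_agent(stub_planner, TOOLS)
for step in history:
    print(step)
```

Restricting the agent to an explicit tool registry and a step limit is a deliberate choice here: both bound what an automated planner can do without a human in the loop.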

- Each Agent operating independently consumes a large amount of RAM, potentially leading to Out Of Memory (OOM) errors on the server.

- Slow startup speeds increase the latency of the entire system.

- Managing dozens of API Keys between Agents easily leads to serious security risks.

- Difficulty in isolating data between user flows (multi-tenant).

To solve these, enterprises need an orchestration platform for Agentic AI that is lightweight, fast, and secure enough to operate a whole "army" of agents at scale safely.

AI Agent team communicating with each other via an Orchestration system

Frequently Asked Questions about Generative AI

What is Generative AI and what sets it apart?

Generative AI is a type of artificial intelligence capable of creating new content such as text, images, and source code from learned data. Unlike traditional AI, which predicts or classifies existing data, Generative AI uses the patterns it has learned to produce entirely new data.

What is the difference between traditional AI and Generative AI?

Traditional AI (Predictive AI) focuses on pattern recognition to make predictions (e.g., spam detection). Conversely, Generative AI uses deep learning models to encode those patterns and create new content from them. As a rule of thumb, choose Predictive AI for scoring, classification, and forecasting problems, and Generative AI for content creation problems.

How does Generative AI minimize "hallucinations"?

To minimize hallucinations, engineers often use RAG techniques. Instead of relying solely on the model's internal training knowledge, the system retrieves actual data from reliable sources before asking the AI to synthesize the answer, helping to ensure higher accuracy.

Why is Transformer Architecture the foundation of current LLMs?

The Transformer architecture uses a "Self-Attention" mechanism that allows models to process data in parallel and understand context relationships between words far apart in a sentence. Because of this, it trains more efficiently on large-scale text than earlier sequential architectures such as recurrent networks.

What are the security risks when deploying AI Agents in an enterprise?

Key risks include sensitive data leakage via APIs, the risk of Prompt Injection attacks to hijack control, and content copyright issues. Enterprises need to deploy isolation systems, strict API key encryption, and other security measures to ensure safety.

Why should businesses shift from a single LLM to Agentic AI?

A single LLM is just a passive "brain." Meanwhile, Agentic AI (coordinated AI agent systems) allows for the automation of complex workflows, including planning, task execution, and self-correction, helping to increase productivity far beyond simple chatbot usage.

In essence, Generative AI has changed how software is built by adding the ability to create and synthesize content, not just analyze it. However, to move AI from a proof of concept (POC) to real-world operations in the enterprise, building a coordinated team of AI Agents is the next step. This is a move from "an intelligent brain" to "a nervous system that automatically acts," helping businesses capture the value of AI at production scale.