What is an AI-First Mindset? Benefits and Enterprise Applications

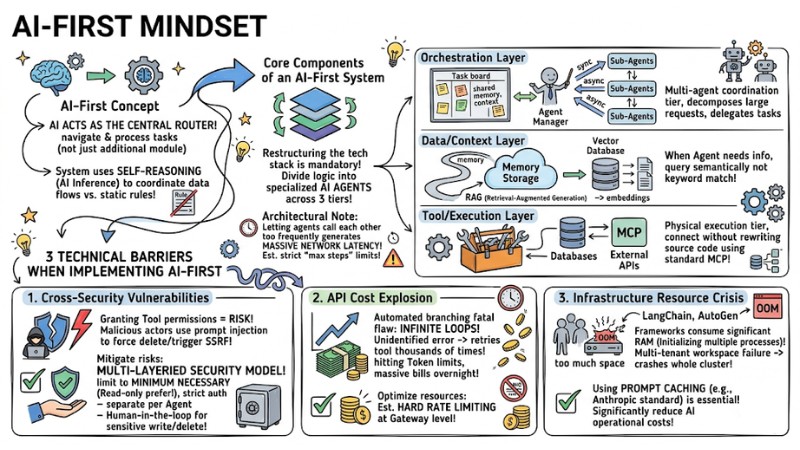

An AI-First mindset marks a shift from "adding AI" to a system to designing the entire software architecture around the reasoning capabilities of AI models from the very beginning. Instead of writing endless if-else branches to handle unstructured data, AI-native enterprises let the LLM act as the routing brain: receiving context, self-selecting processing flows, calling appropriate tools, and making decisions in near real-time. In this article, we will dissect the AI-First concept from a system architecture perspective, its core technical components, and a practical implementation roadmap for businesses.

Key Takeaways

- Definition of AI-First: Understand this as the shift from rule-based software to an AI Router architecture, where the LLM acts as the brain navigating data flows and deciding tasks instead of using static If/Else structures.

- Standard System Architecture: Master the 3-layer structure: Data Layer (RAG/Vector DB), Orchestration Layer (Multi-agent coordination), and Tool/Execution Layer (task execution via standard MCP protocols).

- Restructuring with Agentic Workflow: Replace manual error-handling processes with Agentic AI mechanisms, allowing the system to reason, plan, and test alternatives when exceptions occur.

- Core Risk Defense: Proactively block 3 critical risks: Prompt Injection vulnerabilities, cost explosions due to Infinite Loops, and OOM (Out of Memory) crashes caused by poor resource management.

- Enterprise-Ready Strategy: Implement Multi-tenant isolation (user/workspace data separation) and apply Prompt Caching, which can cut the cost of repeated input tokens by up to 90% and also lowers latency on long prompts.

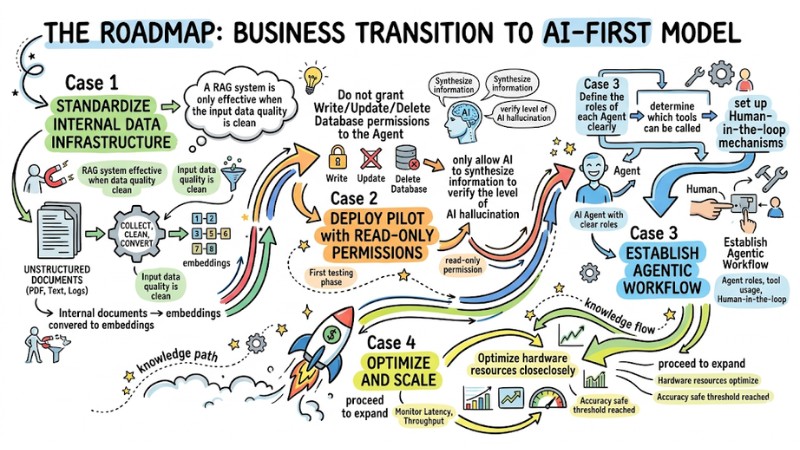

- 4-Step Execution Roadmap: Start from data standardization (Clean Data/RAG) -> Read-only testing -> Establishing Agentic Workflows -> Scaling the system with Human-in-the-loop (HITL) mechanisms for sensitive decisions.

- FAQ Resolution: Get answers to common questions regarding the AI-First mindset.

AI-First Concept from a System Architecture Perspective

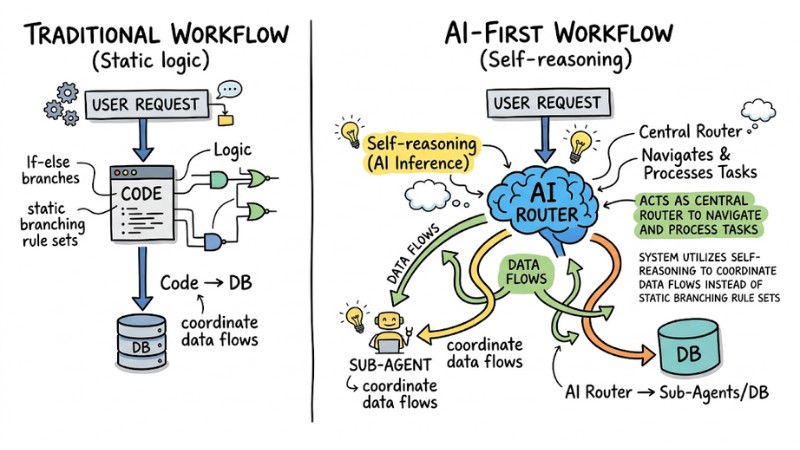

An AI-First architecture is a software design model in which Artificial Intelligence (AI) acts as the central Router to navigate and process tasks, rather than just being an additional module. The system utilizes self-reasoning (AI Inference) to coordinate data flows instead of static branching rule sets.

AI-First Architecture Diagram

Restructuring Processing Flows with Agentic AI

In a traditional rule-based architecture, processing flows are "pre-drawn" by engineers using If/Else or Switch-Case blocks for every specific scenario. The application receives a request, branches according to static logic, and then queries the Database. If the input data deviates even slightly from the defined Regex, the system can easily throw an exception and break the entire processing flow.

With an AI-First mindset, control flows are redesigned around Agentic AI, an architectural trend promoted by organizations such as OpenAI and practitioners such as Andrew Ng. In this model, the application acts as a Gateway, feeding the user's raw request into an AI Router. Based on context, the language model decides which API to call, what parameters to extract, and how to format the result, which significantly increases fault tolerance with unstructured data.
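The routing idea can be sketched in a few lines. This is a minimal illustration, not a production router: `choose_tool` is a hypothetical stand-in for the LLM call (a keyword stub so the example runs offline), and the tool names `get_order_status` and `open_refund_ticket` are invented for the example.

```python
import json
from typing import Callable

# Hypothetical tools the router may call; names and fields are illustrative only.
def get_order_status(order_id: str) -> str:
    return f"Order {order_id} is in transit"

def open_refund_ticket(order_id: str) -> str:
    return f"Refund ticket opened for order {order_id}"

# The gateway registers every callable tool explicitly.
TOOLS: dict[str, Callable[..., str]] = {
    "get_order_status": get_order_status,
    "open_refund_ticket": open_refund_ticket,
}

def choose_tool(user_text: str) -> str:
    """Stand-in for the LLM call (assumption). A real router would send
    `user_text` plus the tool list to a model and receive a JSON decision."""
    digits = "".join(ch for ch in user_text if ch.isdigit())
    name = "open_refund_ticket" if "refund" in user_text.lower() else "get_order_status"
    return json.dumps({"tool": name, "arguments": {"order_id": digits}})

def route(user_text: str) -> str:
    """Parse the model's decision and dispatch to the registered tool."""
    decision = json.loads(choose_tool(user_text))
    tool = TOOLS.get(decision["tool"])
    if tool is None:
        # Never execute a tool the gateway did not register.
        raise ValueError(f"unknown tool: {decision['tool']}")
    return tool(**decision["arguments"])
```

The application code contains no branch per phrasing of the request; it only validates the decision and dispatches it, so new tools are added by registering them rather than by extending a Switch-Case block.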

Core Components of an AI-First System

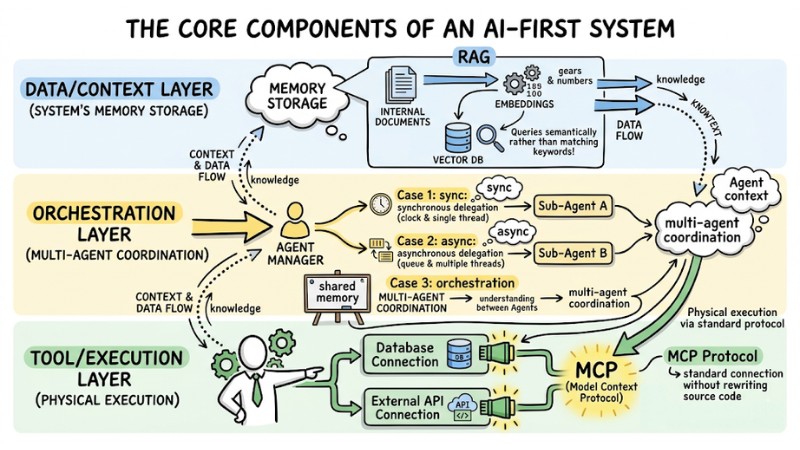

To build a complete AI Agent-oriented architecture, restructuring the tech stack is mandatory. An AI-First process automation system is typically divided into 3 clearly separated layers.

Instead of cramming all logic into a Monolithic block, features should be broken down into specialized AI Agents operating across 3 architectural tiers:

- Data/Context Layer: The system's memory. It uses RAG (Retrieval-Augmented Generation) combined with a Vector Database to convert internal documents into embeddings. When an Agent needs information, it queries semantically rather than matching keywords.

- Orchestration Layer: This is the multi-agent coordination tier. It provides a common task board (shared memory) so Agents can understand each other's context. An Agent Manager decomposes large requests into small tasks and delegates them to Sub-Agents via synchronous (sync) or asynchronous (async) mechanisms.

- Tool/Execution Layer: The physical execution tier. To connect Agents with Databases or external APIs without rewriting source code, modern systems utilize the standard MCP (Model Context Protocol).
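To make the Data/Context Layer concrete, here is a small sketch of semantic lookup by cosine similarity. The three-dimensional vectors are hand-written toy values; a real system would obtain embeddings from an embedding model and store them in a Vector Database, and the document titles are invented for the example.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# Toy "embeddings" (assumption): real vectors come from an embedding model.
INDEX = {
    "Refund policy: 30 days from delivery": [0.9, 0.1, 0.0],
    "Q3 revenue summary by region": [0.1, 0.9, 0.2],
    "VPN setup guide for new hires": [0.0, 0.2, 0.9],
}

def retrieve(query_vec: list[float], k: int = 1) -> list[str]:
    """Return the k documents whose vectors are closest to the query vector."""
    ranked = sorted(INDEX, key=lambda doc: cosine(query_vec, INDEX[doc]), reverse=True)
    return ranked[:k]
```

A query vector that points in roughly the same direction as the refund-policy vector retrieves that document even if the query shares no keywords with it, which is the property the Data Layer relies on.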

Architectural Note: When designing multi-agent systems, letting agents call each other too frequently generates massive network latency. Therefore, you must establish strict "max steps" limits.
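One way to enforce such a limit is to put the cap in the loop that drives the agent, rather than trusting the agent to stop on its own. The sketch below assumes a hypothetical `plan_next_step(history)` callable that returns a dict with a `done` flag and an `output`; the names are illustrative and not taken from any specific framework.

```python
class StepLimitExceeded(RuntimeError):
    """Raised when an agent uses up its step budget without finishing."""

def run_agent(plan_next_step, max_steps: int = 5):
    """Drive an agent loop and abort once `max_steps` iterations are used.

    `plan_next_step` is a hypothetical callable (assumption): it receives the
    history of previous steps and returns {"done": bool, "output": ...}.
    """
    history = []
    for _ in range(max_steps):
        step = plan_next_step(history)
        history.append(step)
        if step["done"]:
            # Return the result together with the number of steps consumed.
            return step["output"], len(history)
    raise StepLimitExceeded(f"agent did not finish within {max_steps} steps")
```

Because the budget lives outside the agent, a planner that keeps delegating or retrying fails fast with a clear error instead of accumulating latency and API calls.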

The core components of an AI-First system

Below is a pseudo-config for an MCP setup allowing an Agent Manager to delegate a data analysis task to a Worker Agent:

```jsonc
// Configure Agent Workflow routing via MCP
{
  "orchestrator": "manager_agent_01",
  "task": "analyze_q3_revenue",
  "delegation_mode": "async",
  "sub_agents": [
    {
      "name": "sql_worker_agent",
      "mcp_tool": "db_query_tool",
      "permissions": ["READ_ONLY"],
      "context_window": "shared_memory_id_992"
    }
  ],
  "fallback_policy": "terminate_on_error"
}
```

3 Technical Barriers when Implementing AI-First

When implementing an AI-First enterprise model, systems lacking infrastructure preparation often face 3 critical risks:

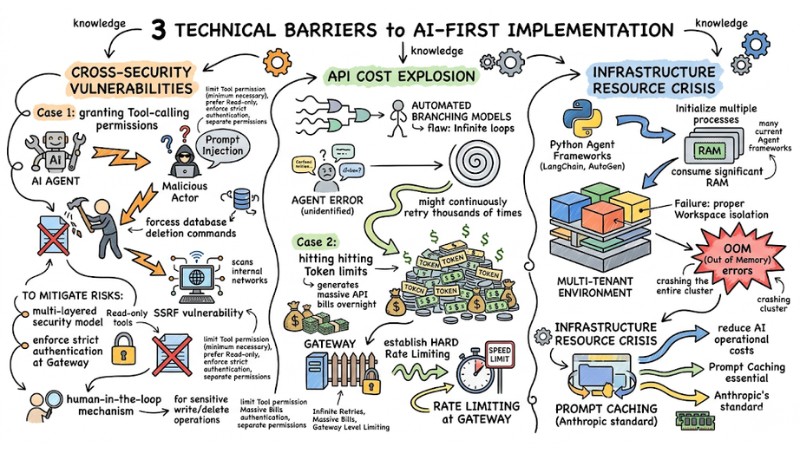

1. Cross-Security Vulnerabilities

When granting Tool-calling permissions to an AI Agent, malicious actors can use prompt injection techniques to force the Agent to execute database deletion commands or trigger SSRF vulnerabilities to scan internal networks.

To mitigate risks, the system needs a multi-layered security model: limit Tool permissions to the minimum necessary (prefer Read-only), enforce strict authentication at the Gateway, separate permissions per Agent and access channel, and mandate human-in-the-loop mechanisms for all sensitive write/delete operations.
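The least-privilege and human-in-the-loop rules can be expressed as a small authorization check that runs before any tool call. This is a sketch under stated assumptions: the `AgentPolicy` class, the action names, and the `approved_by_human` flag are all hypothetical, and a real deployment would enforce the same rules at the database and Gateway level as well.

```python
from dataclasses import dataclass, field

READ, WRITE, DELETE = "READ", "WRITE", "DELETE"
SENSITIVE = {WRITE, DELETE}  # actions that always need human sign-off

@dataclass
class AgentPolicy:
    """Per-agent permission set (hypothetical); read-only unless stated otherwise."""
    name: str
    allowed: set = field(default_factory=lambda: {READ})

def authorize(policy: AgentPolicy, action: str, approved_by_human: bool = False) -> bool:
    """Allow an action only if the agent holds the permission and,
    for sensitive actions, a human has explicitly approved it."""
    if action not in policy.allowed:
        return False
    if action in SENSITIVE and not approved_by_human:
        return False
    return True
```

Note that human approval does not widen an agent's permissions: a read-only agent is refused a delete even when someone approves it, so a successful prompt injection cannot escalate through the approval step alone.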

2. API Cost Explosion

Automated branching models have a fatal flaw: Infinite loops. When an Agent encounters an unidentified error, it might continuously retry a tool thousands of times, hitting Token limits and generating massive API bills overnight.

To optimize resources, you must establish hard Rate limiting at the Gateway level.
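A common way to implement a hard limit is a token bucket. The sketch below is a single-process illustration with hypothetical parameter names; a Gateway serving many instances would typically keep the bucket state in a shared store instead of in memory.

```python
import time

class TokenBucket:
    """Allow at most `capacity` calls in a burst, refilled at `refill_per_sec`."""

    def __init__(self, capacity: int, refill_per_sec: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.clock = clock            # injectable so the limiter can be tested
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        """Return True and consume one token if the call may proceed."""
        now = self.clock()
        elapsed = now - self.updated
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

An Agent stuck in a retry loop drains its bucket after `capacity` calls and is then throttled to the refill rate, which bounds the worst-case bill per agent or per tenant.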

3. Infrastructure Resource Crisis

Many current Agent frameworks written in Python (like LangChain or AutoGen) consume significant RAM when initializing multiple processes. In a Multi-tenant environment, failure to provide proper workspace isolation can cause OOM (Out of Memory) errors, crashing the entire cluster.

On the cost side, Prompt Caching (for example, the feature offered in Anthropic's API) is essential: it lowers the price of re-sending the same context on every request and so significantly reduces AI operational costs.
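A rough back-of-the-envelope model shows where the savings come from. Everything numeric here is an assumption for illustration: the price of 3.0 per million input tokens is hypothetical, and the multipliers (cache writes billed at 1.25x, cache reads at 0.10x the base input price) should be checked against your provider's current pricing page. The model also assumes every call after the first hits a warm cache.

```python
def input_cost(prefix_tokens, fresh_tokens, calls, price_per_mtok,
               cached=False, write_mult=1.25, read_mult=0.10):
    """Estimate input-token cost for `calls` requests sharing one long prefix.

    All prices and multipliers are assumptions supplied by the caller.
    """
    per_tok = price_per_mtok / 1_000_000
    if not cached:
        # Every request pays full price for the whole context.
        return calls * (prefix_tokens + fresh_tokens) * per_tok
    # First request writes the prefix to the cache at a premium.
    first = prefix_tokens * write_mult + fresh_tokens
    # Later requests read the prefix from the cache at a discount.
    later = (calls - 1) * (prefix_tokens * read_mult + fresh_tokens)
    return (first + later) * per_tok

base = input_cost(50_000, 500, 100, 3.0)
with_cache = input_cost(50_000, 500, 100, 3.0, cached=True)
savings = 1 - with_cache / base
```

Under these assumptions, 100 requests that share a 50,000-token prefix cost about 15.15 without caching and about 1.82 with it, a saving of roughly 88%. The saving shrinks as the uncached part of each request grows or as cache hits become less frequent.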

3 technical barriers to AI-First implementation

| Criteria | Unoptimized AI-First System | Enterprise-Standard Architecture |
|---|---|---|
| Access Security | Default Write/Delete permissions. | Read-only permissions, AES-256-GCM encryption. |
| Context Handling | Sends full data with every API request. | Applies Prompt Caching to cut the cost of repeated input tokens by up to 90%. |
| User Isolation | Uses a shared Agent instance. | Fully independent Multi-tenant (Separate Workspaces). |
| Operating Costs | RAM overload, uncontrollable API bills. | Strict Rate limiting, low resource consumption. |

Roadmap to Transitioning to an AI-First Model

To integrate an AI-First mindset into a business strategy without breaking core systems, engineering teams should follow a rigorous deployment process:

- Standardize Internal Data Infrastructure: A RAG system is only effective when the input data quality is clean. You need to collect, clean, and convert unstructured documents (PDF, Text, Logs) into vector embeddings.

- Deploy Pilot with Read-only Permissions: In the first testing phase, do not grant the Agent any Write/Update/Delete permissions on the Database. At this stage, only allow the AI to synthesize information so you can measure how often it hallucinates.

- Establish Agentic Workflow: Define the roles of each Agent clearly, determine which tools can be called, and set up Human-in-the-loop mechanisms.

- Optimize and Scale: Once accuracy reaches a safe threshold, proceed to expand. Monitor Latency, Throughput, and optimize hardware resources closely.

The roadmap for transitioning to an AI-First model for businesses

Frequently Asked Questions about AI-First

How is AI-First different from Mobile-First or Cloud-First models?

AI-First is not just a delivery platform, but a shift in control flow. While Mobile-First prioritizes the interface, AI-First prioritizes the automatic reasoning capability of computers to make decisions instead of waiting for hard logic (if-else) from humans.

Why does AI-First implementation cause API cost explosions?

In a Multi-Agent architecture, agents frequently call each other to exchange data. Without strict control or Prompt Caching, re-sending the entire context in every request will drive the API bill up many times higher than expected.

What are the most common security vulnerabilities in AI-First?

The most dangerous vulnerabilities are Prompt Injection and SSRF (Server-Side Request Forgery). When an Agent is granted access to tools or databases, an attacker can manipulate commands to make the Agent perform unintended actions, seize control of internal systems, or extract data illegally.

How do you ensure user data does not leak?

To isolate data in a Multi-tenant system, you must implement Per-tenant/Per-workspace isolation. Every API Key must be encrypted and subject to strict access policies from the Gateway down to the execution rights of each individual Agent.
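The core of per-tenant isolation is that every read and write is scoped by a tenant identifier that comes from the authenticated session, never from the prompt. The class below is a minimal in-memory sketch with hypothetical names; a real system would apply the same scoping in the Vector Database, the cache, and the tool credentials.

```python
class TenantScopedStore:
    """In-memory store where every key lives inside exactly one tenant's namespace."""

    def __init__(self):
        self._data = {}  # {tenant_id: {key: value}}

    def put(self, tenant_id: str, key: str, value) -> None:
        """Write a value into the given tenant's namespace only."""
        self._data.setdefault(tenant_id, {})[key] = value

    def get(self, tenant_id: str, key: str):
        """Read a value; lookups never fall through to another tenant."""
        try:
            return self._data[tenant_id][key]
        except KeyError:
            raise KeyError(f"{key!r} not found for tenant {tenant_id!r}") from None
```

Two tenants can use the same key without seeing each other's values, and a request for a tenant with no data fails instead of returning someone else's record.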

What role does MCP play in AI-First architecture?

MCP (Model Context Protocol) is a standard protocol that helps AI Agents connect to external toolsets and data without needing to modify source code. It acts as a unified "connector," allowing the system to expand flexibly following a modular standard.

AI-First is not just a new tech slogan; it is a way to rethink the entire software control flow, empowering reasoning models to make decisions instead of rigid if-else blocks. When implemented with a proper architectural standard (RAG, multi-agent, MCP, security, and cost optimization), AI-First helps businesses build more flexible, fault-tolerant systems that are ready to scale without sacrificing data safety or infrastructure budgets.